Real-World AI Projects That Get You Hired

50 Expanded AI Project Ideas with Tools, Datasets, and Career Impact

“Recruiters don’t care about courses; they care about proof.”

This guide presents 50 practical, real-world AI project ideas, each thoroughly detailed with a clear problem statement, step-by-step build process, recommended tools, relevant datasets, key learning outcomes, and an explanation of how it strengthens your job prospects.

Covering everything from beginner-level NLP tasks to advanced LLM-based agents, these projects are designed to bridge the gap between learning AI concepts and becoming job-ready.

Introduction: why projects win over certificates

Let’s start with a truth that most courses won’t tell you: finishing a Udemy certificate, watching YouTube tutorials for six months, or completing an online bootcamp does not make you hireable on its own. Recruiters and hiring managers at AI companies, startups and FAANG alike, are not impressed by a list of courses on your resume. What stops them in their tracks is a GitHub repository with a working, deployed project they can click through.

The AI job market in 2026 is uniquely competitive. The barrier to learning has never been lower: millions of people are watching the same tutorials, reading the same documentation, and listing the same frameworks on their resumes. The barrier to doing, to building something that actually runs, is where most people stop. That gap between consuming knowledge and applying it is exactly where your career opportunity lives.

Projects serve as proof of multiple compounding skills simultaneously. They show that you can set up a development environment, source and clean a real-world dataset, choose an appropriate model or architecture, write code that does not break in production, debug when things go wrong, and eventually ship something a user can interact with. Each of those steps is a distinct skill. A certificate proves only that you watched someone else execute them.

Beyond the hiring signal, building projects accelerates your learning in ways passive study cannot replicate. Sometimes you hit a genuine error at 11pm. It is not a curated exercise error but a real, cryptic traceback that throws you into the deep end of Stack Overflow. When you figure it out, that knowledge becomes permanent. Projects force you into the messy, non-linear reality of engineering work, which is precisely what you will encounter on day one of your new job.

This guide gives you 50 fully expanded AI project ideas. Each one includes a clear problem statement, what you will actually build, the exact tools and libraries to use, where to find the data, a step-by-step implementation outline, what you will learn, and why that specific project resonates with hiring managers. Use this as your project roadmap: pick one, build it properly, deploy it, document it, and move to the next.

Everyone Who Codes: your career partner

Before you dive in, bookmark the platform that bridges the gap between learning and landing your first AI role: everyonewhocode.com

Whether you need structured guidance, accountability, or a mentor who has been in the rooms where hiring decisions are made, Everyone Who Codes has a path for you.

Mock interviews. Resume reviews. 1-on-1 mentorship. Free FAANG prep resources.

Visit: everyonewhocode.com

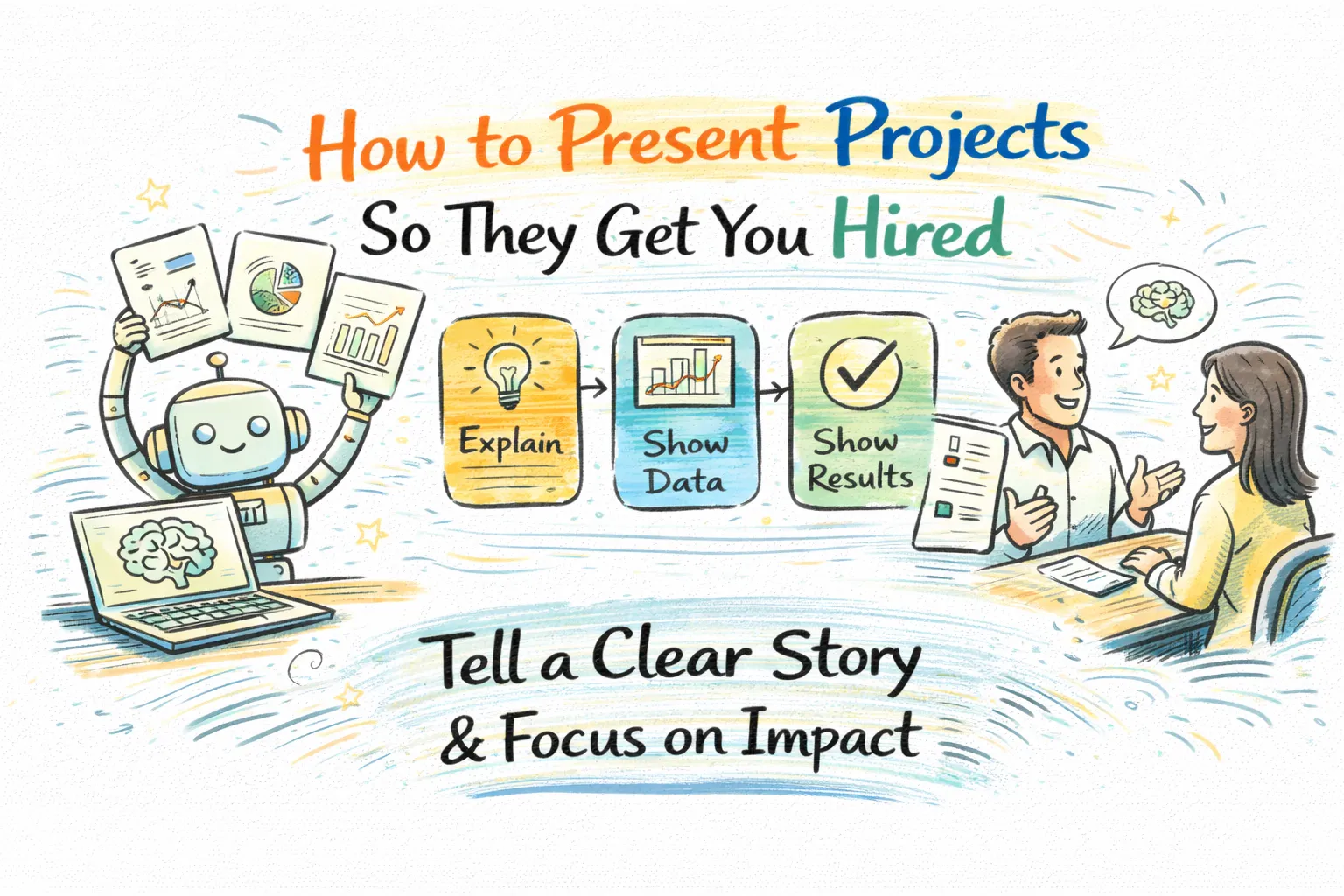

How to present projects so they get you hired

Building a great project is half the equation. The other half is how you package and present it. A recruiter who clicks your GitHub link and finds a single Jupyter notebook with no README, no comments, and no demo will move on in seconds. Here is the framework that consistently converts portfolio views into interview calls.

1. The GitHub repository standard

Every project needs a clean, professional GitHub repository. The README is your project’s front door. It should include a one-paragraph overview of the problem you solved and why it matters, the tech stack you used, clear setup and installation instructions so someone can run your code in under five minutes, screenshots or a GIF of the working application, and a link to the live demo if one exists. Write code comments that explain your reasoning, not just what the code does. Structure your folders with intention. Treat your repository as if a senior engineer at your dream company will review it during a hiring decision, because they very well might.

2. Deploy a live demo

A live, interactive demo is the single most powerful thing you can add to a portfolio project. Frameworks like Streamlit and Gradio let you deploy a working web interface, often in under an hour of work. Free hosting is available on Hugging Face Spaces or Streamlit Community Cloud. When a recruiter can type a question into your chatbot, upload an image to your classifier, or generate a prediction with your forecasting model, that hands-on interaction creates a memory they associate with your name. Static notebooks do not create that experience. Make it interactive, make it accessible, and link it prominently in your README.

3. Tell the story in interviews

For every project you list on your resume or portfolio, prepare a structured 90-second narrative: what problem you were solving and why it interested you, what the technical challenge actually was, what approaches you tried that did not work and why, what you ultimately built and how it performed, and what you would do differently if you rebuilt it today. This is the structure interviewers are probing for when they say ‘walk me through a project you worked on.’ They are not looking for a feature list. They are evaluating your engineering judgment, your problem-solving process, and your capacity to reflect and learn.

4. Common portfolio mistakes to avoid

- Only doing Titanic and Iris datasets: every recruiter has seen hundreds of these. They signal that you have not moved past tutorials. Build projects that solve real, or at least realistic, problems.

- Missing README and no context: a repository without a README is invisible.

- Quantity over depth: twenty shallow projects are worth far less than three well-built, deployed, documented ones.

- Skipping the why: every project needs a problem statement that explains its real-world relevance.

- No evaluation metrics: show precision, recall, F1-score, and confusion matrices. Discuss why you chose those metrics and what trade-offs you made.

These small choices signal genuine understanding rather than copy-paste implementation.
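To make the point about evaluation metrics concrete, here is a minimal sketch of how the confusion matrix, precision, recall, and F1 relate for a binary task. It uses no libraries. In practice, scikit-learn’s `classification_report` and `confusion_matrix` compute these for you. The function name `binary_metrics` and the toy labels are illustrative assumptions.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Confusion-matrix counts plus accuracy, precision, recall and F1 for one positive class."""
    tp = fp = fn = tn = 0
    for t, p in zip(y_true, y_pred):
        if p == positive and t == positive:
            tp += 1
        elif p == positive:
            fp += 1  # predicted positive, actually negative
        elif t == positive:
            fn += 1  # predicted negative, actually positive
        else:
            tn += 1
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    accuracy = (tp + tn) / len(y_true) if y_true else 0.0
    return {"tp": tp, "fp": fp, "fn": fn, "tn": tn, "accuracy": accuracy,
            "precision": precision, "recall": recall, "f1": f1}

y_true = [1, 1, 1, 0, 0, 0, 0, 0]  # toy labels: 3 positives, 5 negatives
print(binary_metrics(y_true, [1, 1, 0, 1, 0, 0, 0, 0]))
print(binary_metrics(y_true, [0] * 8))  # "always negative" baseline
```

On these toy labels, the "always negative" baseline still reaches 62.5% accuracy, yet its recall is zero. That gap is why accuracy alone hides failures on imbalanced data.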

Want expert feedback on your portfolio before applying?

Book a 1-on-1 mentorship session at Everyone Who Codes and get personalized guidance from engineers who review portfolios and make hiring decisions.

1-on-1 Mentorship: everyonewhocode.com/services/1-1-tech-mentorship/

Free FAANG Prep: everyonewhocode.com/free-faang-interview-preparation/

Category 01: Natural language processing (NLP) projects

Natural Language Processing is one of the most accessible entry points into AI because its raw material, text, is everywhere. From social media posts and product reviews to legal contracts and medical records, text data is abundant and the problems it unlocks are immediately recognizable to any hiring manager. These ten projects cover the core NLP skill set: text classification, entity recognition, sentiment analysis, sequence modeling, and transformer-based approaches.

Project 01: Fake news detector

Misinformation spreads faster in the digital age than at any point in history. AI-powered fake news detection is one of the highest-impact applications of NLP, and it’s a project that resonates instantly with any recruiter who reads the news.

Problem statement: Distinguishing false or misleading news articles from legitimate ones at scale, without human review, is a critical unsolved challenge for media platforms, social networks, and democratic institutions.

What you will build: A binary text classifier that takes a news headline or full article as input and outputs a prediction: real or fake, along with a confidence score. Build a Streamlit interface so users can paste any headline and get an instant result.

Tools & Libraries: Python, BERT or DistilBERT (HuggingFace Transformers), scikit-learn, Pandas, Streamlit

Dataset: LIAR Dataset (Kaggle) or the Fake and Real News Dataset. Both are labeled and publicly available. LIAR uses six fine-grained truthfulness labels, which you can collapse into real and fake.

How it works:

- Load and explore the labeled dataset; understand the class distribution and check for imbalance

- Preprocess text: lowercase, remove HTML, tokenize using the BERT tokenizer

- Fine-tune a pre-trained DistilBERT model for sequence classification on your train split

- Evaluate with precision, recall, F1-score, and a confusion matrix on the held-out test set

- Build a Streamlit interface that accepts user-pasted text and returns a prediction with confidence
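The preprocessing and class-balance checks from the first two steps need no deep learning library, so they can be sketched on their own. `clean_text` and `class_distribution` are hypothetical helper names. The actual tokenization step would use the DistilBERT tokenizer from HuggingFace Transformers, and an uncased checkpoint lowercases text by itself.

```python
import html
import re
from collections import Counter

TAG_RE = re.compile(r"<[^>]+>")
SPACE_RE = re.compile(r"\s+")

def clean_text(text: str) -> str:
    """Strip HTML tags, decode entities like &nbsp;, collapse whitespace, lowercase."""
    text = TAG_RE.sub(" ", text)
    text = html.unescape(text)
    text = SPACE_RE.sub(" ", text)
    return text.strip().lower()

def class_distribution(labels):
    """Share of each label, so imbalance is visible before any training."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}

print(clean_text("<p>BREAKING:&nbsp;Scientists <b>Confirm</b> Claim</p>"))
print(class_distribution(["fake", "real", "real", "real"]))
```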

What you will learn:

- Text preprocessing and tokenization for transformer models

- Transfer learning and fine-tuning a pre-trained model on a downstream task

- Handling class imbalance in NLP classification datasets

- Model evaluation beyond accuracy: interpreting F1 and confusion matrices

Why this gets you hired: Media-tech, civic-tech, and cybersecurity companies are actively building fake news detection tools. This project shows NLP skill, social awareness, and the ability to build something with real-world stakes, a combination that stands out in every interview.

Project 02: Resume parser and ranker

Recruiting is a data problem at scale. Many applicant tracking systems rely on simple keyword matching, which misses candidates who describe the same skill in different words. An AI-powered resume parser that understands context and relevance is a much better solution.

Problem statement: Recruiters spend enormous time manually screening hundreds of resumes. A model that extracts skills, experience, and education and ranks candidates against a job description can save hours per role.

What you will build: A system that accepts multiple PDF resumes and a job description, extracts structured information using NLP, computes semantic similarity between each resume and the job requirements, and ranks candidates from most to least relevant with a short explanation.

Tools & Libraries: Python, spaCy, NLTK, PyMuPDF or PDFMiner (for PDF parsing), sentence-transformers for semantic similarity, Streamlit

Dataset: Kaggle Resume Dataset, which contains labeled resumes across job categories. Supplement it with real job descriptions from LinkedIn or Indeed.

How it works:

- Build a PDF text extractor using PyMuPDF; handle multi-column layouts and parse section headers

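- Use spaCy’s Named Entity Recognition to extract skills, degrees, job titles, and years of experience. The pretrained pipelines only recognize generic types such as ORG and DATE, so add an EntityRuler with pattern lists or train a custom NER component for the resume-specific labels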

- Encode both the job description and each resume using a sentence-transformer model (e.g., all-MiniLM-L6-v2)

- Compute cosine similarity between each resume embedding and the job description embedding

- Rank and display results with matched skills highlighted and a compatibility score per candidate
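After the sentence-transformer produces one vector per document (for example through its `encode` method), ranking comes down to cosine similarity and a sort. The sketch below uses hypothetical three-dimensional vectors in place of real embeddings, which have far more dimensions (384 for all-MiniLM-L6-v2). The candidate names are made up for illustration.

```python
import math

def cosine_similarity(a, b):
    """cos(theta) = a.b / (|a| |b|); returns 0.0 for zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def rank_candidates(job_vec, resume_vecs):
    """Return (candidate, score) pairs sorted from most to least similar to the job."""
    scores = {name: cosine_similarity(job_vec, vec) for name, vec in resume_vecs.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Hypothetical stand-ins for sentence-transformer embeddings
job = [0.9, 0.1, 0.3]
resumes = {
    "alice.pdf": [0.8, 0.2, 0.4],
    "bob.pdf": [0.1, 0.9, 0.2],
    "carol.pdf": [0.7, 0.0, 0.1],
}
for name, score in rank_candidates(job, resumes):
    print(f"{name}: {score:.3f}")
```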

What you will learn:

- PDF parsing and unstructured text extraction

- Named Entity Recognition (NER) using spaCy

- Semantic similarity with sentence transformers and cosine distance

- Building practical HR-tech pipelines with real document inputs

Why this gets you hired: HR technology is a multi-billion-dollar market, and AI-powered hiring tools are among its fastest-growing segments. Building this project proves you can combine NLP with a real business process, the kind of applied thinking that companies value enormously.

Project 03: Sentiment predictor for product reviews

Every online retailer, SaaS product, and service business receives thousands of text reviews. Understanding the emotional tone of that feedback at scale, without reading every review manually, is a foundational business intelligence problem.

Problem statement: Manually reading thousands of reviews to understand customer sentiment is impractical. An automated system that classifies reviews as positive, negative, or neutral and surfaces trends is immediately useful to any business.

What you will build: A sentiment classifier that takes raw review text and outputs a sentiment label and confidence score. Extend it with a dashboard showing sentiment distribution over time, most frequent positive and negative keywords, and examples of each class.

Tools & Libraries: Python, HuggingFace Transformers (RoBERTa), VADER (via NLTK or the vaderSentiment package) as a lightweight rule-based baseline, scikit-learn, Pandas, Matplotlib, Streamlit

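Dataset: Amazon Product Reviews dataset (Kaggle) or IMDb Movie Reviews dataset. Both are widely used and well-labeled. IMDb reviews are labeled only positive or negative, so the three-class setup below fits star-rated Amazon reviews better.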

How it works:

- Load and explore the dataset; examine the distribution across sentiment classes

- Preprocess: strip HTML, handle contractions, tokenize with the model’s tokenizer

- Fine-tune a RoBERTa model for 3-class sentiment classification on the training set

- Evaluate with per-class precision, recall, and F1; examine misclassified examples to understand failure modes

- Build a Streamlit dashboard showing sentiment trends, word clouds per class, and a live classification input
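The "most frequent positive and negative keywords" panel reduces to counting words per class. Here is a minimal sketch. The tiny stopword list and the toy reviews are hypothetical. A real project would use a fuller stopword list, such as NLTK's.

```python
import re
from collections import Counter, defaultdict

# Deliberately tiny stopword list for illustration only
STOPWORDS = {"the", "a", "an", "and", "is", "it", "this", "was", "to", "of", "but"}
WORD_RE = re.compile(r"[a-z']+")

def top_keywords_per_class(reviews, labels, k=3):
    """Most frequent non-stopword tokens for each sentiment label."""
    counters = defaultdict(Counter)
    for text, label in zip(reviews, labels):
        words = [w for w in WORD_RE.findall(text.lower()) if w not in STOPWORDS]
        counters[label].update(words)
    return {label: [w for w, _ in c.most_common(k)] for label, c in counters.items()}

reviews = [
    "Great battery, great screen",
    "The battery died fast and the screen cracked",
    "Great value, fast shipping",
    "Terrible support, cracked case",
]
labels = ["positive", "negative", "positive", "negative"]
print(top_keywords_per_class(reviews, labels))
```

Raw counts also surface words that appear in every class: here "battery" shows up on both sides. Ranking by TF-IDF or a per-class log-odds ratio usually gives more distinctive keywords for the dashboard.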

What you will learn:

- Multi-class text classification with transformer models

- Analyzing model errors and identifying patterns in misclassification

- Data visualization and dashboard design with Streamlit and Matplotlib

- Understanding how sentiment models are used in real business intelligence contexts

Why this gets you hired: Sentiment analysis is one of the most deployed NLP applications in industry. A polished version with a real dashboard, not just a model notebook, demonstrates full product thinking. That thinking distinguishes junior developers from the engineers companies want to hire.

Project 04: Autocorrect and autocomplete tool

Autocorrect is something every person uses every day, yet most developers have never built one. Implementing it from scratch reveals the elegance of probabilistic language models and gives you deep intuition for how language prediction works.

Problem statement: Typing errors are universal and constant. An autocorrect system must identify likely misspellings and suggest the most contextually appropriate correction, a harder problem than it first appears.

What you will build: A Python-based autocorrect tool that suggests the most likely correction for any misspelled word, using edit distance for candidate generation and a language model for context-aware ranking. Extend it with an autocomplete feature that predicts the next word given a partial sentence.

Tools & Libraries: Python, NLTK (for tokenization and corpus access), TextBlob (for baseline), BERT (for context-aware correction), Streamlit

Dataset: a large text corpus for language model training, such as the Brown or Gutenberg corpora (available via NLTK) or a Wikipedia dump; a custom sentence test set for evaluation

How it works:

- Implement a baseline spell checker using minimum edit distance (Levenshtein distance) to generate candidate corrections

- Build a unigram and bigram language model from a large text corpus to rank candidates by probability

- Upgrade to a BERT-based masked language model that predicts the most likely correction in context

- Add an autocomplete feature: given partial input, predict the top 3 most likely next words

- Deploy via Streamlit with real-time word prediction as the user types
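The baseline half of this pipeline fits in plain Python: edit-distance candidates, corpus frequency to break ties, and bigram next-word suggestions. This is a Norvig-style sketch on a toy corpus, not the BERT upgrade. The helper names are hypothetical.

```python
from collections import Counter, defaultdict

def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions and substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete ca
                            curr[j - 1] + 1,      # insert cb
                            prev[j - 1] + cost))  # substitute (or match)
        prev = curr
    return prev[-1]

def build_models(tokens):
    """Unigram counts for ranking corrections; bigram counts for next-word suggestions."""
    unigrams = Counter(tokens)
    bigrams = defaultdict(Counter)
    for w1, w2 in zip(tokens, tokens[1:]):
        bigrams[w1][w2] += 1
    return unigrams, bigrams

def correct(word, unigrams, max_distance=2):
    """Closest known word within max_distance edits; ties go to the more frequent word."""
    if word in unigrams:
        return word
    scored = [(levenshtein(word, w), -count, w) for w, count in unigrams.items()]
    scored = [s for s in scored if s[0] <= max_distance]
    return min(scored)[2] if scored else word

def autocomplete(prev_word, bigrams, k=3):
    """Top-k most frequent words seen after prev_word in the corpus."""
    return [w for w, _ in bigrams[prev_word].most_common(k)]

corpus = "the cat sat on the mat the cat ate the fish".split()
unigrams, bigrams = build_models(corpus)
print(levenshtein("kitten", "sitting"))  # 3
print(correct("teh", unigrams))          # the
print(autocomplete("the", bigrams))      # ['cat', 'mat', 'fish']
```

Scoring every vocabulary word with `levenshtein` costs one edit-distance computation per word for each query. That is fine for a demo. For a large vocabulary, generating the edits of the typo instead, or indexing the vocabulary in a BK-tree, keeps lookups fast.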

What you will learn:

- Edit distance algorithms and how they are used in NLP systems

- n-gram language models and probability-based word prediction

- How masked language modeling works in BERT and how to use it for word prediction

- Real-time interactive NLP application development

Why this gets you hired: This project is a perfect conversation starter in interviews because it covers multiple layers of NLP (algorithms, probability, and deep learning) in one coherent system. It shows intellectual range and the ability to build something deceptively simple but technically rich.

Project 05: Social media spam detector

Bot accounts and spam comments contaminate social media platforms, spreading misinformation, promoting scams, and degrading the user experience. Automated detection is essential at any scale.

Problem statement: Identifying spam comments from legitimate ones on platforms like YouTube or Instagram requires understanding not just keywords but context, repetition, and suspicious patterns. That makes it a natural NLP classification problem.

What you will build: A spam detection classifier trained on labeled YouTube comment data that identifies whether a new comment is spam or legitimate. Add a simulation feature where users can input a comment and see a prediction with reasoning.

Tools & Libraries: Python, BERT or ALBERT (HuggingFace), scikit-learn, NLTK, Pandas, Streamlit

Dataset: YouTube Spam Collection Dataset (UCI Machine Learning Repository): comments from five YouTube videos, each labeled as spam or ham

How it works:

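- Load and explore the dataset; check the balance between spam and legitimate comments (this collection is roughly balanced overall, but real comment streams are typically dominated by legitimate comments)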

- Preprocess: lowercase, remove URLs and special characters, tokenize

- Train a TF-IDF + Logistic Regression baseline; then fine-tune a BERT or ALBERT model to compare

- Evaluate both models; analyze false positives (legitimate comments flagged as spam) carefully

- Build a Streamlit interface where users paste comments and get classification results with confidence

What you will learn:

- Handling class imbalance with oversampling or weighted loss functions

- Comparing traditional ML (TF-IDF + Logistic Regression) to modern transformer approaches

- Understanding why false positives matter more than false negatives in moderation contexts

- Practical experience with text classification pipeline deployment
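The preprocessing step and the oversampling idea from the learning outcomes can both be sketched with the standard library alone. `preprocess` and `random_oversample` are hypothetical helpers. Oversample only the training split, after splitting, so duplicated comments never leak into the test set.

```python
import random
import re
from collections import Counter

URL_RE = re.compile(r"https?://\S+|www\.\S+")
NON_ALNUM_RE = re.compile(r"[^a-z0-9\s]")

def preprocess(comment: str) -> str:
    """Lowercase, drop URLs and special characters, collapse whitespace."""
    text = comment.lower()
    text = URL_RE.sub(" ", text)
    text = NON_ALNUM_RE.sub(" ", text)
    return " ".join(text.split())

def random_oversample(texts, labels, seed=0):
    """Duplicate randomly chosen minority-class examples until all classes match the largest."""
    rng = random.Random(seed)
    by_label = {}
    for text, label in zip(texts, labels):
        by_label.setdefault(label, []).append(text)
    target = max(len(group) for group in by_label.values())
    out_texts, out_labels = [], []
    for label, group in by_label.items():
        extra = [rng.choice(group) for _ in range(target - len(group))]
        out_texts.extend(group + extra)
        out_labels.extend([label] * (len(group) + len(extra)))
    return out_texts, out_labels

print(preprocess("Check out my channel!!! https://youtu.be/abc123 :)"))
train_texts = ["nice song", "love this", "great video", "sub to me", "free gift card"]
train_labels = ["ham", "ham", "ham", "spam", "spam"]
_, balanced_labels = random_oversample(train_texts, train_labels)
print(Counter(balanced_labels))
```

Links are a strong spam signal. Replacing URLs with a placeholder token instead of deleting them is worth testing as an alternative.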

Why this gets you hired: Content moderation is a high-priority concern at every major platform. This project applies NLP skill to a clearly real and recognizable problem with easy-to-understand business impact. That is exactly what non-technical interviewers need in order to understand your work.

Project 06: Fake product review identifier

Fraudulent reviews manipulate purchasing decisions, erode consumer trust, and can amount to deceptive trade practices that are illegal in many jurisdictions. AI-powered detection is a growing need across e-commerce platforms.

Problem statement: Businesses and bad actors pay for fake positive reviews, and platforms need to detect and remove them automatically. The challenge is that well-crafted fake reviews can read exactly like genuine ones; only subtle statistical and linguistic patterns give them away.

What you will build: A classifier that distinguishes genuine hotel or product reviews from deceptive ones, using both surface-level features (review length, punctuation patterns) and deep semantic features from pre-trained language models.

Tools & Libraries: Python, BERT, RoBERTa, XLNet (HuggingFace), scikit-learn, Pandas, NLTK

Dataset: Deceptive Opinion Spam Corpus (Cornell University): 1,600 labeled hotel reviews, balanced between genuine and deceptive

How it works:

- Load the dataset; perform exploratory analysis examining length, vocabulary richness, and punctuation patterns between classes

- Extract hand-crafted features: average sentence length, ratio of first-person pronouns, readability scores

- Fine-tune RoBERTa on the labeled dataset for binary classification

- Combine hand-crafted features with model embeddings in a meta-classifier, and check whether the combination actually improves on RoBERTa alone

- Evaluate with cross-validation; report precision and recall with attention to false negative rate (deceptive reviews classified as genuine)
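The hand-crafted features in the second step can be computed with regular expressions. This is a minimal sketch. The feature names, the first-person word list, and the naive sentence splitter are assumptions. Readability scores are omitted because they need a syllable counter. The resulting feature dict would be concatenated with the RoBERTa embedding before the meta-classifier.

```python
import re

FIRST_PERSON = {"i", "me", "my", "mine", "myself", "we", "us", "our", "ours", "ourselves"}
SENTENCE_SPLIT_RE = re.compile(r"[.!?]+")
WORD_RE = re.compile(r"[A-Za-z']+")

def stylometric_features(review: str) -> dict:
    """Surface features that often differ between genuine and deceptive reviews."""
    sentences = [s for s in SENTENCE_SPLIT_RE.split(review) if s.strip()]
    words = WORD_RE.findall(review)
    n_words = len(words)
    return {
        "n_words": n_words,
        "avg_sentence_len": n_words / len(sentences) if sentences else 0.0,
        "first_person_ratio": (sum(w.lower() in FIRST_PERSON for w in words) / n_words
                               if n_words else 0.0),
        "exclamations": review.count("!"),
        "type_token_ratio": len({w.lower() for w in words}) / n_words if n_words else 0.0,
    }

print(stylometric_features("My husband and I loved this hotel! We will definitely return."))
```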

What you will learn:

- Feature engineering for text classification beyond bag-of-words

- Combining traditional ML features with deep learning embeddings in a hybrid classifier

- Understanding deception detection as a specialized NLP problem

- Model interpretability: using LIME or SHAP to explain which words drive predictions

Why this gets you hired: E-commerce platforms spend millions fighting fake reviews. This project combines NLP skill with a domain that every recruiter instantly understands and cares about. The hybrid feature engineering approach also demonstrates a machine learning depth that purely deep-learning projects lack.

Project 07: Text summarization engine

The volume of text produced daily is staggering: news articles, research papers, legal documents, earnings reports. AI-powered summarization is one of the most commercially valuable NLP applications in existence.

Problem statement: Humans cannot read everything relevant to their work or interests. A summarization system that condenses long documents into accurate, coherent summaries without losing critical information is genuinely difficult to build well.

What you will build: An abstractive text summarization tool that takes a long article or document as input and generates a concise summary in new language (not just extracted sentences). Build a Streamlit or Gradio interface where users can paste text or upload a document.

Tools & Libraries: Python, HuggingFace Transformers (BART or T5 for abstractive summarization), Pegasus model, Pandas, Streamlit or Gradio

Dataset: CNN/DailyMail News Summarization Dataset (HuggingFace Datasets), one of the standard summarization benchmarks, with source articles and reference summaries

How it works:

- Load and explore the CNN/DailyMail dataset; understand the structure of source articles and reference summaries

- Fine-tune a BART-base or T5-small model on the dataset using the HuggingFace Trainer API

- Evaluate generated summaries using ROUGE scores (ROUGE-1, ROUGE-2, ROUGE-L) against reference summaries

- Implement both extractive (sentence selection) and abstractive (model generation) summarization; let users compare outputs

- Deploy with a clean interface where users can adjust summary length and, if they paste a reference summary, see its ROUGE score
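ROUGE measures n-gram overlap between a generated summary and a reference. The sketch below computes ROUGE-1, ROUGE-2, and an F1 variant of ROUGE-L with plain whitespace tokenization, and it is meant for intuition only. Reportable numbers should come from an established implementation such as Google's `rouge-score` package or HuggingFace `evaluate`. Those tools also handle tokenization and optional stemming, so their scores will differ slightly.

```python
from collections import Counter

def _ngrams(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def _f1(overlap, cand_total, ref_total):
    if overlap == 0:
        return 0.0
    precision, recall = overlap / cand_total, overlap / ref_total
    return 2 * precision * recall / (precision + recall)

def rouge_n(candidate, reference, n=1):
    """F1 over clipped n-gram matches between candidate and reference."""
    cand = _ngrams(candidate.lower().split(), n)
    ref = _ngrams(reference.lower().split(), n)
    overlap = sum((cand & ref).values())  # multiset intersection clips repeated n-grams
    return _f1(overlap, sum(cand.values()), sum(ref.values()))

def _lcs_length(a, b):
    """Length of the longest common subsequence (dynamic programming, one row at a time)."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]

def rouge_l(candidate, reference):
    cand, ref = candidate.lower().split(), reference.lower().split()
    return _f1(_lcs_length(cand, ref), len(cand), len(ref))

reference = "the cat sat on the mat"
candidate = "the cat lay on the mat"
print(rouge_n(candidate, reference, 1), rouge_n(candidate, reference, 2), rouge_l(candidate, reference))
```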

What you will learn:

- Sequence-to-sequence models and encoder-decoder architectures for generation tasks

- ROUGE metric evaluation for summarization quality measurement

- Fine-tuning generative models using HuggingFace Trainer

- How abstractive summarization differs from extractive approaches and when to use each

Why this gets you hired: Text summarization is embedded in enterprise software, legal tech, media tools, and research platforms. Showing that you can fine-tune a generative model, not just call an API, signals a depth of NLP competency that many applicants cannot demonstrate.

Project 08: Hate speech detection system

Online hate speech causes measurable psychological harm, contributes to real-world violence, and represents one of the most difficult content moderation challenges facing internet platforms today.

Problem statement: Classifying text as hateful versus non-hateful is complicated by context-dependence, cultural variation, sarcasm, and the fact that benign language can become hateful depending on how it is used. It requires a model that understands context, not just keywords.

What you will build: A multi-class classifier that categorizes social media posts as hate speech, offensive language, or neutral. Build an analysis dashboard showing the distribution of classifications across a dataset and flagging high-confidence hate speech for review.

Tools & Libraries: Python, HuggingFace Transformers (BERT or RoBERTa), scikit-learn, Pandas, Matplotlib, Streamlit

Dataset: Hate Speech and Offensive Language Dataset (Davidson et al.): about 25,000 labeled tweets across three classes, available on Kaggle and GitHub.

How it works:

- Load and explore the dataset; examine the severe class imbalance (most tweets are offensive but not hate speech)

- Preprocess tweets: remove handles, URLs, and hashtags but preserve slang and informal language patterns

- Implement a weighted cross-entropy loss function to handle class imbalance during fine-tuning

- Fine-tune RoBERTa for 3-class classification; evaluate per-class F1 with special attention to hate speech recall

- Build a moderation simulation dashboard where users upload a batch of text and see category breakdowns
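Weighted cross-entropy scales each example's loss by a weight for its true class. One common choice, assumed here, is the "balanced" heuristic: weight_c = n_samples / (n_classes * count_c). The sketch shows the arithmetic for single examples using toy labels. In PyTorch, you would pass the weights to `torch.nn.CrossEntropyLoss(weight=...)`. Its default mean reduction divides by the sum of the applied weights rather than the batch size.

```python
import math
from collections import Counter

def balanced_class_weights(labels):
    """weight_c = n_samples / (n_classes * count_c): rare classes get larger weights."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {c: n_samples / (n_classes * count) for c, count in counts.items()}

def weighted_cross_entropy(logits, target, weights, classes):
    """Loss for one example: -weight[target] * log softmax(logits)[target]."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    log_prob = logits[classes.index(target)] - log_sum_exp
    return -weights[target] * log_prob

classes = ["hate", "offensive", "neither"]
labels = ["hate"] + ["offensive"] * 7 + ["neither"] * 2  # toy, heavily imbalanced
weights = balanced_class_weights(labels)
print(weights)
logits = [0.2, 2.0, 0.5]  # a model leaning towards "offensive"
for target in classes:
    print(target, round(weighted_cross_entropy(logits, target, weights, classes), 3))
```

With these weights, misreading a true hate speech example as offensive costs far more than the reverse. That pushes fine-tuning toward the hate speech recall the evaluation step cares about.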

What you will learn:

- Handling severe class imbalance with weighted loss and oversampling

- The difference between offensive language and hate speech, a real-world annotation challenge

- Responsible AI considerations in content moderation: the consequences of false positives

- Batch inference and dataset-level analysis beyond single-sample prediction

Why this gets you hired: Every social platform, comment system, and community forum needs content moderation. This project demonstrates NLP skill in a high-stakes, socially important context, something that ethical AI teams and trust-and-safety roles specifically look for.

Project 09: Language translator application

Neural machine translation was one of the transformative breakthroughs of modern deep learning. Building your own translation system gives you direct experience with sequence-to-sequence architectures and the attention mechanism that powers them.

Problem statement: Translating text between languages while preserving meaning, tone, and nuance requires a model that understands both the source and target language deeply: a quintessential sequence-to-sequence problem.

What you will build: A translation application built on a pre-trained transformer model that translates text between English and at least one other language (Hindi, French, or Spanish). Build a Streamlit interface with source and target language selectors.

Tools & Libraries: Python, HuggingFace Transformers (Helsinki-NLP MarianMT or Facebook’s NLLB-200 model), PyTorch, Streamlit

Dataset: IIT Bombay English-Hindi Parallel Corpus for English-Hindi; WMT datasets for European languages. All are available via HuggingFace Datasets.

How it works:

- Load a pre-trained MarianMT model for your chosen language pair from HuggingFace Model Hub

- Implement the translation pipeline: tokenize source text, run model inference, decode output tokens to target language

- Fine-tune the model on a domain-specific parallel corpus (e.g., technical documentation or legal text) for improved domain accuracy

- Evaluate translation quality using BLEU score on a held-out test set

- Deploy with a Streamlit interface that supports multiple language pairs, and report BLEU on the held-out test set (BLEU needs a reference translation, so it cannot score arbitrary user input)
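BLEU combines clipped n-gram precisions (up to 4-grams) with a brevity penalty. The sketch below is unsmoothed, uses a single reference, and exists only to show how the score is built. Report test-set scores with a standard tool such as sacreBLEU, because tokenization and smoothing choices change the numbers. NLTK also provides `nltk.translate.bleu_score.sentence_bleu`.

```python
import math
from collections import Counter

def _ngrams(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def sentence_bleu(candidate, reference, max_n=4):
    """Unsmoothed BLEU for one candidate against one reference, uniform n-gram weights."""
    cand, ref = candidate.split(), reference.split()
    if not cand:
        return 0.0
    log_precision_sum = 0.0
    for n in range(1, max_n + 1):
        cand_ngrams = _ngrams(cand, n)
        total = sum(cand_ngrams.values())
        clipped = sum((cand_ngrams & _ngrams(ref, n)).values())  # modified precision
        if clipped == 0 or total == 0:
            return 0.0  # any zero precision makes unsmoothed BLEU zero
        log_precision_sum += math.log(clipped / total)
    # Brevity penalty: candidates shorter than the reference are penalized
    bp = 1.0 if len(cand) > len(ref) else math.exp(1 - len(ref) / len(cand))
    return bp * math.exp(log_precision_sum / max_n)

reference = "the cat is on the mat"
print(sentence_bleu("the cat is on the mat", reference))
print(sentence_bleu("the cat is on mat", reference))
print(sentence_bleu("hello world", reference))
```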

What you will learn:

- Transformer encoder-decoder architecture for sequence-to-sequence tasks

- Tokenization for multilingual models: byte-pair encoding and SentencePiece

- BLEU score evaluation and its limitations for assessing translation quality

- Domain adaptation: fine-tuning a general model for specialized text

Why this gets you hired: Language technology roles are growing rapidly, particularly in global companies serving multilingual markets. This project shows you understand the architecture powering tools used by billions of people, a level of depth that impresses both technical and non-technical interviewers.

Project 10: Next sentence and text completion predictor

Predicting what comes next (the next word, the next sentence, the next paragraph) is the core task on which modern large language models are trained. Building a text completion model from the ground up gives you foundational insight into how GPT-style systems work.

Problem statement: Understanding and predicting coherent continuations of text requires modeling long-range dependencies in language. Earlier n-gram and recurrent models handled this poorly until attention and the transformer architecture made it tractable.

What you will build: A text completion tool that accepts a partial sentence or paragraph and generates multiple plausible continuations. Show different decoding strategies: greedy decoding, beam search, and top-k sampling. Let users compare the outputs.

Tools & Libraries: Python, HuggingFace Transformers (GPT-2 or a small Llama model), PyTorch, NLTK, Streamlit

Dataset: WikiText-103 dataset for language model training; pre-trained GPT-2 weights for fine-tuning from HuggingFace

How it works:

- Load a pre-trained GPT-2 model and experiment with text generation using the pipeline API to understand baseline outputs

- Fine-tune GPT-2 on a domain-specific dataset (e.g., coding documentation, legal text, or news articles) to create a specialized completion model

- Implement three decoding strategies: greedy decoding, beam search (try beams of 3, 5, and 10), and top-k sampling with different temperature settings

- Evaluate outputs qualitatively by human assessment and quantitatively using perplexity on a test set

- Build an interactive Streamlit app where users type partial text, choose a decoding strategy, and compare up to three generated completions side by side
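The decoding strategies can be compared on a toy next-token distribution before you touch GPT-2. This sketch assumes a hypothetical four-word vocabulary with hand-picked logits, and it omits beam search for brevity. With HuggingFace models, the same choices are made through `generate` arguments such as `num_beams`, `do_sample`, `top_k`, and `temperature`. Perplexity is the exponential of the average negative log-probability that the model assigns to the observed tokens.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert logits to probabilities; temperature < 1 sharpens, > 1 flattens."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def greedy(vocab, logits):
    """Greedy decoding: always take the single most likely token."""
    return vocab[max(range(len(logits)), key=lambda i: logits[i])]

def top_k_sample(vocab, logits, k=2, temperature=1.0, rng=random):
    """Top-k sampling: renormalize over the k best tokens, then sample one."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    probs = softmax([logits[i] for i in top], temperature)
    return vocab[rng.choices(top, weights=probs, k=1)[0]]

def perplexity(token_probs):
    """exp(mean negative log-probability) of the tokens that actually occurred."""
    return math.exp(-sum(math.log(p) for p in token_probs) / len(token_probs))

# Hypothetical next-token scores after the prompt "The cat sat on the"
vocab = ["mat", "sofa", "roof", "moon"]
logits = [3.0, 2.5, 1.0, -1.0]
rng = random.Random(0)
print("greedy:", greedy(vocab, logits))
print("top-k=2, T=0.7:", [top_k_sample(vocab, logits, k=2, temperature=0.7, rng=rng) for _ in range(5)])
print("top-k=3, T=1.5:", [top_k_sample(vocab, logits, k=3, temperature=1.5, rng=rng) for _ in range(5)])
print("perplexity when every observed token had p=0.25:", perplexity([0.25] * 4))
```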

What you will learn:

- How autoregressive language models generate text token by token

- The trade-off between decoding strategies: quality vs diversity vs speed

- Fine-tuning causal language models on domain-specific text

- Perplexity as a language model evaluation metric and what it measures

Why this gets you hired: Every company working with LLMs needs people who understand what is happening under the hood, not just how to call an API. This project proves that understanding. It is one of the most impressive beginner-to-intermediate NLP projects you can show because it directly mirrors the architecture of products worth billions of dollars.

Category 02: Computer vision projects

Computer vision projects have an enormous advantage in a portfolio: they are instantly demo-able. A live webcam application, a working image classifier, or a real-time object detector creates a powerful impression in interviews that is almost impossible to achieve with a text-only project. These ten projects cover the computer vision skill stack from object detection to medical imaging to multi-modal systems.

Project 11: Real-time object detection system

Object detection is the foundational capability behind self-driving cars, surveillance systems, retail automation, and robotics. Building a working object detector is one of the clearest demonstrations of computer vision skill.

Problem statement: Detecting multiple objects within a single image means identifying what they are and where they are. This requires a model that simultaneously learns to classify each object and to regress its bounding box coordinates.

What you will build: An object detection system using a pre-trained SSD or YOLOv8 model that can identify and label objects in still images and live webcam video. Build a Streamlit interface with image upload and an optional real-time webcam mode.

Tools & Libraries: Python, TensorFlow or PyTorch, OpenCV, YOLOv8 (Ultralytics) or SSD MobileNet, Streamlit

Dataset: Open Images object detection data (hundreds of object classes; a version is hosted on Kaggle); COCO Dataset (80 object categories) for pre-trained model validation

How it works:

- Load a pre-trained YOLOv8 model and run inference on sample images to understand the output format: bounding boxes, class labels, confidence scores

- Fine-tune the model on a custom subset of Open Images for a specific domain (e.g., only vehicles, only kitchen objects)

- Implement non-maximum suppression to handle overlapping bounding boxes

- Add real-time video detection using OpenCV’s webcam capture and overlay bounding boxes on the live feed

- Deploy as a Streamlit app supporting both image upload and webcam modes with adjustable confidence threshold
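Intersection over union and greedy non-maximum suppression are short enough to write by hand, which is a good way to see what happens after inference. Ultralytics already applies NMS in YOLOv8's prediction pipeline, so treat this as an illustrative sketch. It assumes boxes in (x1, y1, x2, y2) pixel format. Production NMS is also usually applied per class.

```python
def iou(box_a, box_b):
    """Intersection over union for boxes given as (x1, y1, x2, y2) with x2 > x1, y2 > y1."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: keep the highest-scoring box, drop boxes that overlap it too much, repeat."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        best = order.pop(0)
        keep.append(best)
        order = [i for i in order if iou(boxes[best], boxes[i]) < iou_threshold]
    return keep

# Two near-duplicate detections of one object plus a separate object
boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
scores = [0.9, 0.8, 0.7]
print(round(iou(boxes[0], boxes[1]), 3))
print(nms(boxes, scores))
```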

What you will learn:

- How single-stage and two-stage object detectors differ architecturally

- Anchor boxes (used by SSD; YOLOv8 itself is anchor-free), bounding box regression, and intersection over union (IoU)

- Fine-tuning a detection model on a custom object class

- Real-time inference optimization and frame rate considerations

Why this gets you hired: Object detection is everywhere in production AI systems. YOLOv8 and its successors are among the most widely used real-time detectors. Knowing how to fine-tune and deploy them is a concrete, specific skill that many job descriptions explicitly list.

Project 12: Animal species image classifier

Image classification is the entry point to computer vision, and building a robust multi-class classifier using transfer learning teaches you the core workflow that powers much more complex systems.

Problem statement: Identifying species from photographs is valuable in wildlife conservation, veterinary applications, and educational technology. The challenge is distinguishing visually similar species and handling variation in pose, lighting, and background.

What you will build: A 10-class animal species classifier using transfer learning on VGG-16 or ResNet50. Deploy as a web app where users can upload any animal photo and get a prediction with confidence scores for all classes.

Tools & Libraries: Python, TensorFlow or PyTorch, Keras, VGG-16 or ResNet50 (pre-trained on ImageNet), OpenCV, Streamlit or Gradio

Dataset: Animals-10 Dataset (Kaggle): 10 animal classes (dog, cat, horse, spider, butterfly, chicken, sheep, cow, squirrel, elephant) with 26,000+ images in total

How it works:

- Load the Animals-10 dataset; split into train, validation, and test sets with stratified sampling

- Apply data augmentation: random horizontal flip, rotation up to 20 degrees, random crop, and color jitter

- Load VGG-16 with pre-trained ImageNet weights; freeze all convolutional layers and replace the final classification head with a 10-class dense layer

- Train only the new head for 10 epochs; then unfreeze the last 2 convolutional blocks and fine-tune end-to-end at a low learning rate

- Evaluate with a classification report and confusion matrix; visualize which images were misclassified and why
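The stratified split in the first step can be sketched in NumPy as below (a hypothetical helper, assuming one class label per image). scikit-learn’s `train_test_split(..., stratify=labels)` does the same job for a single split; the manual version just makes the per-class logic explicit.

```python
import numpy as np

def stratified_split(labels, val_frac=0.15, test_frac=0.15, seed=0):
    """Return train/val/test index arrays with every class split in the same proportions."""
    labels = np.asarray(labels)
    rng = np.random.default_rng(seed)
    train, val, test = [], [], []
    for cls in np.unique(labels):
        idx = np.flatnonzero(labels == cls)  # all image indices of this class
        rng.shuffle(idx)
        n_test = int(round(len(idx) * test_frac))
        n_val = int(round(len(idx) * val_frac))
        test.extend(idx[:n_test])
        val.extend(idx[n_test:n_test + n_val])
        train.extend(idx[n_test + n_val:])
    return np.array(train), np.array(val), np.array(test)
```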

What you will learn:

- Transfer learning workflow: freeze, train head, unfreeze, fine-tune

- Data augmentation strategies and why they improve generalization

- The intuition behind convolutional feature hierarchies: what each layer detects

- How to interpret a confusion matrix for multi-class image classification

Why this gets you hired: Image classification is the ‘hello world’ of computer vision, but doing it right with proper augmentation, fine-tuning strategy, and honest evaluation demonstrates competency that many juniors lack. This project is foundational for any CV-focused role.

Project 13: Pneumonia detection from chest x-rays

Medical AI is one of the highest-impact and fastest-growing application areas in artificial intelligence. Building a model that detects disease from medical imaging touches skills that healthcare companies, startups, and research institutions are actively hiring for.

Problem statement: Radiologists are in short supply globally, and missed pneumonia diagnoses cause preventable deaths. An AI system that assists in triaging X-ray images, flagging likely pneumonia cases for urgent review, can have genuine life-saving impact.

What you will build: A 3-class image classifier (normal, bacterial pneumonia, viral pneumonia) trained on chest X-ray images using ResNet50 with FastAI. Deploy as a clinical simulation app where a doctor can upload an X-ray and receive a classification with confidence and a Grad-CAM visualization showing which regions drove the prediction.

Tools & Libraries: Python, FastAI, ResNet50 or ResNet101, PyTorch, Grad-CAM, Streamlit

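Dataset: Kaggle Chest X-Ray Images (Pneumonia) Dataset: 5,863 JPEG X-ray images from Guangzhou Women and Children’s Medical Center, organized into two folder labels (Normal and Pneumonia); the bacterial vs viral split needed for the 3-class task is encoded in the pneumonia image file names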

How it works:

- Load the dataset with FastAI’s ImageDataLoaders; apply appropriate medical image preprocessing including normalization

- Handle class imbalance using oversampling of the minority class and weighted random sampling in the DataLoader

- Fine-tune a ResNet50 model using FastAI’s one-cycle learning rate policy for efficient training

- Implement Grad-CAM (Gradient-weighted Class Activation Maps) to generate visual explanations of model predictions

- Build a Streamlit app where users upload an X-ray and see the predicted class, confidence, and the Grad-CAM heatmap overlay showing the model’s attention regions
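The class-imbalance step boils down to giving each image a sampling weight inversely proportional to its class frequency. The sketch below (hypothetical helper names) computes those weights in NumPy and simulates the resampling; in PyTorch you would pass the same weights to `torch.utils.data.WeightedRandomSampler(weights, num_samples, replacement=True)` instead of drawing indices yourself.

```python
import numpy as np

def inverse_frequency_weights(labels):
    """Per-sample weights such that every class has the same total weight."""
    labels = np.asarray(labels)
    classes, counts = np.unique(labels, return_counts=True)
    class_weight = dict(zip(classes.tolist(), (1.0 / counts).tolist()))
    return np.array([class_weight[label] for label in labels.tolist()])

def weighted_resample(labels, n_samples, seed=0):
    """Draw sample indices with replacement, proportional to their weights."""
    w = inverse_frequency_weights(labels)
    rng = np.random.default_rng(seed)
    return rng.choice(len(w), size=n_samples, replace=True, p=w / w.sum())
```

With these weights each class is drawn about equally often per epoch, so minority-class images are simply repeated more.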

What you will learn:

- Medical image preprocessing requirements: intensity normalization, and how DICOM (the standard clinical imaging format) differs from the JPEG files used in this dataset

- FastAI’s high-level training API and how it abstracts PyTorch complexity

- Class imbalance handling strategies in image datasets

- Model explainability using Grad-CAM, which is critical for medical AI applications

Why this gets you hired: Medical AI is one of the most exciting and highest-paying specializations in the field. Building a project in this domain, especially with explainability built in, immediately differentiates you from candidates who have only worked on toy datasets. Healthcare AI companies see this project and know you have thought carefully about deployment in high-stakes settings.

Project 14: Real-time face mask detection

During and after the COVID-19 pandemic, automated mask detection was widely deployed in public safety systems at airports, hospitals, and commercial venues. It is an excellent real-world application of computer vision.

Problem statement: Manually checking mask compliance in high-traffic environments is impractical and prone to error. An automated system using a standard camera can detect and alert in real time at a fraction of the cost.

What you will build: A binary classifier (mask / no mask) built on MobileNet V2 that runs in real time on a webcam feed. Extend it to draw bounding boxes around each detected face and overlay a color-coded mask status indicator.

Tools & Libraries: Python, TensorFlow, Keras, MobileNet V2 (pre-trained on ImageNet), OpenCV, Streamlit

Dataset: Face Mask Dataset (GitHub / Kaggle): approximately 7,553 images in two classes, with masks and without masks

How it works:

- Load and preprocess the dataset: resize all images to 224×224, normalize pixel values, and augment with flips and brightness variation

- Fine-tune MobileNet V2 by replacing its classification head with a binary output layer; use binary cross-entropy loss

- Add a face detection step using OpenCV’s Haar Cascade or DNN face detector to locate faces in the frame before running classification

- Implement real-time inference: capture webcam frames, detect faces, classify each face, and overlay a green (mask) or red (no mask) bounding box

- Deploy as a Streamlit app supporting both image upload and live webcam modes
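The detection-plus-classification loop hinges on two small pieces of glue: cropping each detected face (with a margin, clamped to the frame) before classification, and turning the classifier’s output into a label and color. A minimal sketch, assuming boxes in (x1, y1, x2, y2) pixels, a classifier that returns P(mask), and OpenCV’s BGR color order; the helper names and the 0.5 threshold are hypothetical.

```python
import numpy as np

GREEN = (0, 255, 0)  # BGR, the channel order OpenCV uses
RED = (0, 0, 255)

def crop_face(frame: np.ndarray, box, margin=0.1) -> np.ndarray:
    """Crop a detected face with a small margin, clamped to the frame bounds."""
    h, w = frame.shape[:2]
    x1, y1, x2, y2 = box
    dx, dy = int((x2 - x1) * margin), int((y2 - y1) * margin)
    x1, y1 = max(0, x1 - dx), max(0, y1 - dy)
    x2, y2 = min(w, x2 + dx), min(h, y2 + dy)
    return frame[y1:y2, x1:x2]

def annotate(face_boxes, mask_probs, threshold=0.5):
    """Turn per-face P(mask) values into (box, label, color) overlay instructions."""
    out = []
    for box, p in zip(face_boxes, mask_probs):
        if p >= threshold:
            out.append((box, f"Mask {p:.0%}", GREEN))
        else:
            out.append((box, f"No mask {1 - p:.0%}", RED))
    return out
```

In the app, each crop would be resized to 224×224 before going to the MobileNet V2 model, and the resulting tuples drawn with `cv2.rectangle` and `cv2.putText`.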

What you will learn:

- Why MobileNet V2 is preferred for edge and real-time applications over heavier architectures

- Combining a detection model (face detector) with a classification model in a pipeline

- Real-time inference optimization: how to maintain frame rate while running classification

- Practical deployment of computer vision in safety-critical settings

Why this gets you hired: This project is immediately recognizable, visually impressive as a demo, and demonstrates the ability to build a complete pipeline: detection plus classification plus real-time output. The live demo element alone makes it one of the most attention-grabbing portfolio pieces you can build.

Project 15: Age and gender detection from facial images

Demographic inference from images is used in digital advertising, access control, personalized retail experiences, and security systems. Building this from scratch reveals how deep learning models handle inherently ambiguous visual prediction tasks.

Problem statement: Estimating age from a photograph is an inherently imprecise task, even for humans. A model must learn subtle facial features (skin texture, facial structure, wrinkles) and map them to an age range rather than a single value.

What you will build: A dual-output model that simultaneously predicts the age group and gender of a person from a facial photograph. Use OpenCV for real-time face detection and run the age/gender prediction on each detected face.

Tools & Libraries: Python, OpenCV (including its DNN module for loading pre-trained Caffe models), PyTorch or TensorFlow, Streamlit

Dataset: UTKFace Dataset (Kaggle): 20,000+ facial images labeled with age, gender, and ethnicity, spanning ages 0 to 116

How it works:

- Load the UTKFace dataset; parse file names to extract age and gender labels

- For the age task, experiment with both regression (predict exact age) and classification (predict age group: child, young adult, adult, senior)

- Load OpenCV’s pre-trained Caffe-based face detector; extract face regions from images

- Train a CNN with two output heads: one for age prediction and one for gender classification; use a combined loss function

- Build a Streamlit app where users upload a photo or use a webcam and see bounding boxes with predicted age range and gender overlaid
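Two pieces of this project are easy to get subtly wrong: parsing labels out of UTKFace file names and combining the two task losses. The sketch below assumes UTKFace’s documented naming scheme `[age]_[gender]_[race]_[date&time].jpg` (gender 0 = male, 1 = female) and skips any file that does not follow it. The age-group boundaries and loss weights are hypothetical choices, and the NumPy loss mirrors what `nn.L1Loss` plus `nn.BCEWithLogitsLoss` would compute in PyTorch.

```python
from pathlib import Path
import numpy as np

# Hypothetical age-group boundaries (inclusive); adjust to your product needs
AGE_GROUPS = [(0, 12, "child"), (13, 24, "young adult"), (25, 59, "adult"), (60, 200, "senior")]

def parse_utkface_name(filename):
    """'26_0_1_20170116174525125.jpg.chip.jpg' -> (26, 0); None if the name is malformed."""
    parts = Path(filename).name.split("_")
    try:
        age, gender = int(parts[0]), int(parts[1])
    except (IndexError, ValueError):
        return None
    return (age, gender) if gender in (0, 1) else None

def age_group(age):
    """Map an exact age to a coarse class label for the classification variant."""
    for lo, hi, name in AGE_GROUPS:
        if lo <= age <= hi:
            return name
    raise ValueError(f"age out of range: {age}")

def combined_loss(age_pred, age_true, gender_logit, gender_true, w_age=1.0, w_gender=1.0):
    """Weighted sum of age MAE (regression head) and gender BCE (classification head)."""
    mae = np.mean(np.abs(age_pred - age_true))
    x, y = gender_logit, gender_true
    # Numerically stable binary cross-entropy computed directly from logits
    bce = np.mean(np.maximum(x, 0) - x * y + np.log1p(np.exp(-np.abs(x))))
    return w_age * mae + w_gender * bce
```

Because age MAE is measured in years while BCE is around 0.7 for an untrained head, the weights matter: without them the age head tends to dominate training.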

What you will learn:

- Multi-output model design: single network with multiple task heads

- The choice between regression and classification for continuous labels like age

- Using OpenCV’s DNN module to load Caffe models, a common pattern in production CV systems

- Ethical considerations around demographic inference models

Why this gets you hired: Age and gender detection projects show up in computer vision roles frequently. More importantly, the multi-output model architecture introduces an important advanced concept. Combined with the real-time demo, this project demonstrates both depth and breadth in CV.

Project 16: Hand gesture recognition application

Gesture recognition is the technology behind touchless interfaces, sign language translation apps, gaming controllers, and accessibility tools. It is a rich computer vision problem that combines detection, tracking, and classification.

Problem statement: Interpreting human gestures from video in real time requires accurately localizing the hand, extracting meaningful features from its configuration, and classifying the gesture quickly enough for interactive use.

What you will build: A gesture recognition web application that identifies 10 distinct hand gestures from a live webcam feed or uploaded image. Use MediaPipe Hands for landmark detection and a custom classifier for gesture recognition.

Tools & Libraries: Python, MediaPipe (Google’s hand landmark detection), VGG-16 or a custom CNN, OpenCV, TensorFlow, Streamlit

Dataset: Kaggle Hand Gesture Recognition Database: 20,000 labeled images of 10 gestures performed by 10 different subjects

How it works:

- Use MediaPipe Hands to extract 21 hand landmark coordinates from each video frame in real time

- Engineer features from the 21 landmarks: relative angles between fingers, distances between key points, and hand orientation

- Train a lightweight classifier (Random Forest, SVM, or small MLP) on the extracted landmark features rather than raw pixels; this is typically much faster and less sensitive to background and lighting

- Compare this landmark-based approach with a CNN trained directly on cropped hand images; measure accuracy and inference speed

- Deploy a real-time Streamlit app where users perform gestures on camera and see the recognized gesture label with confidence
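The landmark feature step might look like this in NumPy. It assumes the 21 MediaPipe hand landmarks have already been copied into a (21, 3) array of x, y, z values, and uses MediaPipe’s landmark numbering (0 = wrist, 9 = base of the middle finger, 4/8/12/16/20 = fingertips). It covers translation- and scale-normalized coordinates plus fingertip distances; joint angles could be added the same way.

```python
import numpy as np

WRIST, MIDDLE_MCP = 0, 9
FINGERTIPS = [4, 8, 12, 16, 20]  # thumb, index, middle, ring, pinky tips

def landmark_features(landmarks):
    """(21, 3) MediaPipe hand landmarks -> 1-D feature vector of length 73."""
    pts = np.asarray(landmarks, dtype=float)
    pts = pts - pts[WRIST]                     # translation invariance: wrist at the origin
    scale = np.linalg.norm(pts[MIDDLE_MCP])    # palm size as a reference length
    pts = pts / (scale if scale > 0 else 1.0)  # scale invariance: hand distance from camera
    tips = pts[FINGERTIPS]
    # Pairwise distances between the five fingertips (10 values)
    i, j = np.triu_indices(len(FINGERTIPS), k=1)
    tip_dists = np.linalg.norm(tips[i] - tips[j], axis=1)
    return np.concatenate([pts.ravel(), tip_dists])  # 63 coordinates + 10 distances
```

The resulting vectors can be fed straight into scikit-learn’s Random Forest or SVM.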

What you will learn:

- MediaPipe as a production-ready pose and hand estimation framework

- Feature engineering from keypoints, a powerful alternative to end-to-end CNNs

- The trade-off between landmark-based and image-based approaches for gesture recognition

- Building interactive real-time applications with webcam input

Why this gets you hired: Gesture recognition is used in accessibility tech, AR/VR, and human-computer interaction. The landmark-based approach you use here is common in production systems, and it shows you think about efficiency and practicality, not just model accuracy.

Project 17: Car license plate recognition system

License plate recognition (LPR) is a mature but continuously evolving computer vision application used in traffic enforcement, parking management, toll collection, and border control. Building a working end-to-end system is an impressive portfolio achievement.

Problem statement: Accurately reading license plates in real-world conditions (varying angles, lighting, speeds, and partial occlusion) requires a robust detection step followed by precise character recognition.

What you will build: An end-to-end LPR pipeline that detects a vehicle, localizes the license plate, and reads the characters. Test it on video footage and output the recognized plate numbers with timestamps.

Tools & Libraries: Python, YOLOv8 (for plate detection), OpenCV (for preprocessing), EasyOCR or Tesseract (for character recognition), PyTorch, Streamlit

Dataset: UFPR-ALPR Dataset or custom video footage; alternatively, images from the Open Images ‘Vehicle registration plate’ class for fine-tuning the plate detector

How it works:

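- Use a COCO-pre-trained YOLOv8 model to detect vehicles, then run a second YOLOv8 model fine-tuned on license plate annotations to localize plates within each cropped vehicle image (the stock COCO classes do not include license plates)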

- Preprocess detected plate regions: deskew, denoise, apply adaptive thresholding to improve OCR accuracy

- Apply EasyOCR to extract characters from the preprocessed plate image; compare with Tesseract on the same plates

- Handle edge cases: partially obscured plates, wet/dirty plates, angled shots

- Build a pipeline that processes video frame by frame, tracks unique plates, and outputs a CSV of detected plates with timestamps
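The deduplication and CSV step can be sketched in plain Python. It assumes each OCR read already carries a track ID from a tracker (for example Ultralytics’ `model.track`, which assigns IDs to boxes across frames). Reads are normalized, majority-voted per track to smooth over single-frame OCR errors such as 8/B confusions, and written out with the first-seen timestamp; the helper names and `min_reads=2` are hypothetical.

```python
import csv
import io
import re
from collections import Counter, defaultdict

def normalize_plate(text):
    """Uppercase and keep only letters/digits, so 'ab-123 c' and 'AB123C' match."""
    return re.sub(r"[^A-Z0-9]", "", text.upper())

def summarize_reads(reads, min_reads=2):
    """reads: iterable of (track_id, timestamp_s, ocr_text). Returns one row per tracked plate."""
    by_track = defaultdict(list)
    for track_id, ts, text in reads:
        plate = normalize_plate(text)
        if plate:
            by_track[track_id].append((ts, plate))
    rows = []
    for items in by_track.values():
        if len(items) < min_reads:
            continue  # too few reads: likely a spurious detection
        plate, _ = Counter(p for _, p in items).most_common(1)[0]  # majority vote
        first_seen = min(ts for ts, _ in items)
        rows.append({"plate": plate, "first_seen_s": first_seen, "reads": len(items)})
    return sorted(rows, key=lambda r: r["first_seen_s"])

def to_csv(rows):
    """Serialize the summary rows to CSV text."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=["plate", "first_seen_s", "reads"])
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()
```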

What you will learn:

- Multi-stage computer vision pipelines: detection then recognition

- OCR technology and text recognition from natural images

- Video processing with OpenCV: frame extraction, tracking, deduplication

- Building production-ready CV pipelines that handle real-world edge cases

Why this gets you hired: LPR is widely deployed for parking and traffic enforcement. Building a working system end-to-end, especially one that handles video input, demonstrates serious computer vision engineering ability. The multi-stage pipeline architecture is exactly what production CV engineers build.

Project 18: Image caption generator

Image captioning, generating natural language descriptions of visual content, is one of the most beautiful intersections of computer vision and NLP. It underpins accessibility features, content indexing, and visual question answering.

Problem statement: Generating an accurate, fluent description of an image requires the model to identify objects, understand their spatial relationships, and produce grammatically correct language: a true multi-modal challenge.

What you will build: An image captioning system that takes any photograph as input and generates a natural language description. Use a CNN encoder to extract visual features and an LSTM or transformer decoder to generate captions. Compare against a pre-trained BLIP model.

Tools & Libraries: Python, PyTorch, CNN (ResNet50 for encoding), Transformer decoder or LSTM, HuggingFace (BLIP model), Streamlit or Gradio

Dataset: MS COCO Captions Dataset, the standard benchmark for image captioning: 330,000+ images, with 5 human-written reference captions for each training and validation image

How it works:

- Implement the classic CNN-LSTM encoder-decoder architecture: ResNet50 extracts a feature vector, LSTM decoder generates words autoregressively

- Train on a subset of COCO; evaluate using BLEU-4 and CIDEr scores against reference captions

- Load a pre-trained BLIP (Bootstrapping Language-Image Pre-training) model from HuggingFace and compare its caption quality to your trained model

- Add an image-to-question feature: given an image, generate a factual question about its content using the same framework

- Build a Gradio interface where users upload images and see generated captions from both your model and BLIP, with quality scores

What you will learn:

- Encoder-decoder architectures for multi-modal generation

- BLEU and CIDEr evaluation metrics for caption quality

- How vision-language pre-training (BLIP, CLIP) advances the state of the art

- Beam search for caption generation and how it differs from greedy decoding
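The difference between greedy decoding and beam search is easiest to see on a toy example. In the sketch below, `TOY_MODEL` is a hypothetical stand-in for the trained decoder’s next-word probabilities: greedy decoding commits to the locally best first word (‘a’) and ends with a lower-probability caption, while a beam of width 2 keeps ‘the’ alive and finds the higher-probability ‘the dog’.

```python
import math

END = "<end>"

# Hypothetical toy "decoder": next-token probabilities given the caption so far
TOY_MODEL = {
    (): {"a": 0.6, "the": 0.4},
    ("a",): {"dog": 0.4, "cat": 0.3, "bird": 0.3},
    ("the",): {"dog": 0.9, "cat": 0.1},
}

def toy_step(prefix):
    """Return {token: log_prob} for the next token; any unknown prefix ends the caption."""
    probs = TOY_MODEL.get(tuple(prefix), {END: 1.0})
    return {tok: math.log(p) for tok, p in probs.items()}

def greedy_decode(step, max_len=20):
    """Always take the single most likely next token."""
    seq, score = [], 0.0
    for _ in range(max_len):
        tok, lp = max(step(seq).items(), key=lambda kv: kv[1])
        seq, score = seq + [tok], score + lp
        if tok == END:
            break
    return seq, score

def beam_search(step, beam_width=2, max_len=20):
    """Keep the beam_width best partial captions (by total log-probability) at each step."""
    beams, finished = [([], 0.0)], []
    for _ in range(max_len):
        candidates = [(seq + [tok], score + lp)
                      for seq, score in beams
                      for tok, lp in step(seq).items()]
        candidates.sort(key=lambda c: c[1], reverse=True)
        beams = []
        for seq, score in candidates[:beam_width]:
            (finished if seq[-1] == END else beams).append((seq, score))
        if not beams:
            break
    return max(finished + beams, key=lambda c: c[1])
```

Real decoders compare captions of different lengths, so beam search implementations usually add length normalization; it is omitted here for brevity.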

Why this gets you hired: Image captioning powers accessibility features on many major platforms. This project is visually stunning as a demo and demonstrates multi-modal AI competency, a skill set in extremely high demand as companies build systems that understand both images and text.

Project 19: Sign language recognition app

Sign language recognition is one of the most socially impactful applications of computer vision: it can fundamentally improve communication access for millions of people with hearing disabilities.

Problem statement: American Sign Language (ASL) uses distinct handshapes and movements to represent words. A system that interprets these gestures and converts them to text in real time enables fluid communication between ASL users and those who do not know sign language.

What you will build: A real-time ASL alphabet and word recognition system using MediaPipe for hand landmark detection and a custom classifier trained on sign language gesture data. Build a mobile-friendly interface for real-world use.

Tools & Libraries: Python, MediaPipe Hands, TensorFlow, I3D (Inflated 3D ConvNet, an Inception-based video model, for video-based word recognition), OpenCV, Streamlit

Dataset: Word-Level American Sign Language (WLASL) Video Dataset: video clips covering 2,000 ASL sign classes; also the ASL Alphabet Image Dataset on Kaggle for static sign recognition

How it works:

- Start with static sign recognition using MediaPipe hand landmarks and a Random Forest or MLP classifier on the landmark coordinates

- Extend to dynamic signs (words that require movement) using a sequence of landmark frames as input to an LSTM or Transformer

- Fine-tune an I3D (Inflated 3D ConvNet) video classifier, the Inception-based model used as a baseline in the original WLASL work, on the WLASL dataset for full word recognition

- Implement a text-to-speech output: recognized signs are converted to spoken words using pyttsx3

- Deploy as a real-time Streamlit app with webcam support, live sign detection, and a running transcript of recognized signs
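Moving from static to dynamic signs mainly changes the input shape: instead of one (21, 3) landmark array, the sequence model sees a window of consecutive frames. A minimal NumPy sketch, assuming one hand per frame, `None` for frames where MediaPipe found no hand, and hypothetical window and stride values of 30 and 5 frames:

```python
import numpy as np

def to_array(per_frame_landmarks, n_points=21):
    """Stack per-frame (21, 3) landmark arrays; frames with no detected hand (None) become zeros."""
    return np.stack([np.zeros((n_points, 3)) if lm is None else np.asarray(lm, dtype=float)
                     for lm in per_frame_landmarks])

def landmark_sequences(frames, window=30, stride=5):
    """(T, 21, 3) landmarks -> (N, window, 63) overlapping windows for an LSTM or Transformer."""
    flat = np.asarray(frames, dtype=float).reshape(len(frames), -1)  # (T, 63)
    if len(flat) < window:
        return np.empty((0, window, flat.shape[1]))
    starts = range(0, len(flat) - window + 1, stride)
    return np.stack([flat[s:s + window] for s in starts])
```

Each window then becomes one training example labeled with the sign performed in that clip.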

What you will learn:

- Building classification systems on human pose landmarks rather than raw pixels

- Temporal sequence modeling for dynamic gesture recognition using LSTM

- Accessibility-driven AI product design

- The I3D (Inflated 3D ConvNet) architecture for video understanding tasks

Why this gets you hired: Sign language recognition sits at the intersection of high technical difficulty and clear humanitarian impact, two qualities that stand out in portfolios. This project tells a compelling story in interviews and demonstrates multi-faceted CV skill: static recognition, temporal modeling, and real-time deployment.

Project 20: Violence detection in video streams

Automated violence detection in video is used in surveillance systems, content moderation for video platforms, and public safety applications. It is a real-world computer vision challenge with clear deployment context.

Problem statement: Large video platforms receive hundreds of hours of uploaded video every minute. Manually reviewing flagged content for violence is expensive and psychologically harmful for reviewers. An automated pre-screening system can dramatically reduce that burden.

What you will build: A violence detection classifier that processes video clips and classifies them as violent or non-violent, with a confidence score and a timestamp indicating when violent activity occurs. Build a content moderation simulation interface.

Tools & Libraries: Python, TensorFlow, VGG-16 or ResNet50 (for frame-level features), LSTM (for temporal aggregation across frames), OpenCV, Streamlit

Dataset: Violent Flows Dataset and Hockey Fight Videos Dataset, both publicly available for academic use with binary violence/no-violence labels

How it works:

- Extract frames from video clips at 2-5 frames per second using OpenCV; save as image sequences

- Extract visual features from each frame using a pre-trained VGG-16 feature extractor (remove the final classification layer)

- Feed the sequence of per-frame feature vectors into an LSTM to model temporal dynamics and make a clip-level prediction

- Evaluate on both datasets; compute precision and recall with attention to false negatives (missed violence)

- Build a Streamlit app where users upload a video and see a timeline visualization with violence probability scores per second
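The frame-sampling and timeline steps can be sketched in plain Python. The frame count and FPS would come from OpenCV (`cap.get(cv2.CAP_PROP_FRAME_COUNT)` and `cap.get(cv2.CAP_PROP_FPS)` on a `cv2.VideoCapture`); here they are passed in directly. The sketch also assumes, hypothetically, that the LSTM scores every run of `window_len` consecutive sampled frames (stride 1), and it reports each second’s maximum violence probability, which favors catching violence over avoiding false alarms.

```python
from collections import defaultdict

def sample_frame_indices(n_frames, src_fps, target_fps):
    """Indices of the frames to keep so the clip is sampled at roughly target_fps."""
    if target_fps >= src_fps:
        return list(range(n_frames))
    step = src_fps / target_fps
    indices, k = [], 0
    while True:
        idx = int(round(k * step))
        if idx >= n_frames:
            break
        indices.append(idx)
        k += 1
    return indices

def per_second_timeline(frame_indices, window_probs, src_fps, window_len):
    """window_probs[i] scores the sampled frames i .. i+window_len-1.
    Returns {second: highest probability of any window overlapping that second}."""
    timeline = defaultdict(float)
    for i, p in enumerate(window_probs):
        start_s = frame_indices[i] / src_fps
        end_s = frame_indices[i + window_len - 1] / src_fps
        for sec in range(int(start_s), int(end_s) + 1):
            timeline[sec] = max(timeline[sec], p)
    return dict(sorted(timeline.items()))
```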

What you will learn:

- Video understanding as a spatial-temporal problem: combining CNN features with recurrent sequence modeling

- Frame extraction and video preprocessing with OpenCV

- Why clip-level classification requires temporal context beyond individual frames

- Content moderation system design: the cost of false negatives vs false positives

Why this gets you hired: Video AI is one of the most technically demanding and commercially valuable areas of computer vision. Building a working video classification system, particularly one with a temporal component, demonstrates skills that most CV candidates never reach.

Category 03 Classic ML & predictive modeling

Before deep learning became dominant, machine learning meant regression, decision trees, ensemble methods, and time series models. These techniques remain the most deployed form of AI in production systems in finance, healthcare, retail, and logistics. Every AI practitioner needs a strong foundation here.

Project 21: Stock price predictor using LSTM

Time series forecasting of financial data is one of the most studied and commercially valuable applications of machine learning. LSTM networks are particularly well-suited to this task because of their ability to learn long-range temporal dependencies.

Problem statement: Stock prices are influenced by hundreds of interacting variables (historical patterns, market sentiment, macroeconomic indicators), making accurate prediction genuinely difficult. Most naive approaches fail; a well-engineered LSTM may capture patterns simpler models miss, but it should always be compared against a simple baseline such as ‘tomorrow’s price equals today’s’.

What you will build: A stock price forecasting system that predicts the next 7-30 days of closing prices for a given stock using historical OHLCV (Open, High, Low, Close, Volume) data plus technical indicators as features.

Tools & Libraries: Python, TensorFlow or Keras, NumPy, Pandas, yfinance (Yahoo Finance API), Matplotlib, Streamlit

Dataset: Yahoo Finance data via the yfinance Python library: free access to historical OHLCV data for publicly traded stocks (yfinance is an unofficial, community-maintained wrapper, not a Yahoo product)

How it works:

- Download 5 years of historical data for a chosen stock using yfinance; compute technical indicators: RSI, EMA, MACD, Bollinger Bands using the ta library

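- Split the data chronologically (no shuffling): the first 80% of the timeline for training, the final 20% for testing. Fit MinMaxScaler on the training portion only and apply it to both portions, so no information from the test period leaks into training; then create input sequences of 60 days to predict the next 7 days

- Build an LSTM model with 2 stacked LSTM layers and dropout regularization; train it on the training portion

- Evaluate on the held-out final 20% using RMSE and MAE; plot predicted vs actual closing prices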

- Build a Streamlit dashboard where users enter a ticker symbol, choose a prediction horizon, and see the forecast with historical context
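The preprocessing above (chronological split, scaling fitted on the training period only, 60-day windows predicting 7 days) can be sketched in NumPy as follows. It assumes the features are already in a (days, features) array; `target_col` must point at the Close column (for example index 3 if the columns are Open, High, Low, Close, Volume). The manual min-max scaling mirrors what MinMaxScaler does when fitted on the training rows only.

```python
import numpy as np

def make_windows(data, lookback, horizon, target_col):
    """Slice a (T, F) array into inputs (N, lookback, F) and targets (N, horizon)."""
    X, y = [], []
    for start in range(len(data) - lookback - horizon + 1):
        X.append(data[start:start + lookback])
        y.append(data[start + lookback:start + lookback + horizon, target_col])
    return np.array(X), np.array(y)

def prepare_sequences(data, lookback=60, horizon=7, train_frac=0.8, target_col=0):
    """Chronological split + min-max scaling fitted on the training period only."""
    data = np.asarray(data, dtype=float)
    split = int(len(data) * train_frac)
    lo, hi = data[:split].min(axis=0), data[:split].max(axis=0)
    span = np.where(hi > lo, hi - lo, 1.0)  # avoid dividing by zero for constant columns
    scaled = (data - lo) / span             # test values may fall outside [0, 1]; that is expected
    X_train, y_train = make_windows(scaled[:split], lookback, horizon, target_col)
    # Test windows reuse the last `lookback` training rows as context; all targets lie after `split`
    X_test, y_test = make_windows(scaled[split - lookback:], lookback, horizon, target_col)
    return X_train, y_train, X_test, y_test, (lo, span)
```

To turn predictions back into prices, invert the scaling for the target column: `pred * span[target_col] + lo[target_col]`.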

What you will learn:

- Time series data preprocessing: windowing, normalization, and train/test split strategy

- LSTM architecture and why it outperforms vanilla RNNs for long sequences

- Technical indicator engineering for financial data

- Evaluating regression models for forecasting: RMSE, MAE, and directional accuracy

Why this gets you hired: Every fintech company, trading desk, and investment platform is exploring ML-based forecasting. This project is one of the most recognizable and discussed in finance-adjacent AI roles. Build it well and you have a natural conversation anchor for any interview in the space.

Project 22: Credit card fraud detection

Payment card fraud alone causes over 30 billion dollars in losses worldwide each year. Machine learning fraud detection systems are among the most commercially critical AI deployments in production, running billions of transactions through models every day.

Problem statement: Fraud detection is a classic imbalanced classification problem: fraudulent transactions typically represent less than 0.2% of all transactions, so plain accuracy is misleading (a model that labels every transaction legitimate already scores about 99.8%). The engineering challenge is catching fraud without flagging so many legitimate transactions that customers are blocked unnecessarily.

What you will build: A fraud detection classifier trained on real credit card transaction data that identifies fraudulent transactions while minimizing false positives. Implement and compare multiple approaches to class imbalance and evaluate rigorously using the right metrics.

Tools & Libraries: Python, scikit-learn, imbalanced-learn (SMOTE, NearMiss), XGBoost, Pandas, Matplotlib, Seaborn

Dataset: European Cardholders Credit Card Fraud Dataset (Kaggle / ULB): 284,807 transactions with 492 frauds (0.172%); features V1 to V28 are PCA-transformed for anonymity, while Time and Amount are left in their original form

How it works:

- Load and explore the dataset; visualize the extreme class imbalance using count plots and proportion calculations

- Implement three imbalance handling strategies (no resampling with class weights, SMOTE oversampling, and NearMiss undersampling) and train a separate model for each

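- Train Logistic Regression, Random Forest, and XGBoost classifiers within a cross-validation pipeline for each resampling strategy; use imbalanced-learn’s Pipeline so resampling is applied only to the training folds and the validation folds keep the real class distribution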

- Evaluate using precision-recall curves and ROC-AUC; explain why accuracy is the wrong metric and what the precision-recall trade-off means in this business context

- Build a simulation interface where users can see how different confidence thresholds change the false positive rate and fraud capture rate
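The threshold simulator is essentially a loop over cut-offs. The NumPy sketch below (a hypothetical helper, not part of any library) reports precision, recall (the fraud capture rate), and false positive rate for each threshold, which is what the interface would display; scikit-learn’s `precision_recall_curve` computes the precision/recall pairs over all thresholds at once.

```python
import numpy as np

def threshold_report(y_true, scores, thresholds):
    """For each threshold: precision, recall (fraud capture rate) and false positive rate."""
    y_true = np.asarray(y_true).astype(bool)
    scores = np.asarray(scores, dtype=float)
    rows = []
    for t in thresholds:
        pred = scores >= t  # flagged as fraud
        tp = int(np.sum(pred & y_true))
        fp = int(np.sum(pred & ~y_true))   # legitimate transactions declined
        fn = int(np.sum(~pred & y_true))   # fraud that slipped through
        tn = int(np.sum(~pred & ~y_true))
        rows.append({
            "threshold": t,
            "precision": tp / (tp + fp) if tp + fp else float("nan"),
            "recall": tp / (tp + fn) if tp + fn else float("nan"),
            "fpr": fp / (fp + tn) if fp + tn else float("nan"),
        })
    return rows
```

Raising the threshold trades recall for precision; the business question is where the cost of a declined legitimate purchase outweighs the cost of missed fraud.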

What you will learn:

- Class imbalance as a fundamental ML engineering challenge and multiple strategies to address it

- Why precision-recall curves are more informative than ROC curves for imbalanced data

- Business cost framing: the cost of false positives (declined legitimate transactions) vs false negatives (missed fraud)

- Comparing multiple models and resampling strategies in a rigorous cross-validation framework

Why this gets you hired: Fraud detection is one of the highest-value, highest-stakes ML deployments in existence. Every bank, fintech, payment processor, and e-commerce company has a fraud team. This project shows you understand the full complexity, not just model accuracy, and that you think about ML in terms of business impact.

Project 23: Employee salary prediction

Salary transparency and fair compensation are critical issues in HR and labor economics. AI-powered salary prediction tools help both companies benchmark compensation and job seekers understand their market value.

Problem statement: Predicting appropriate salary ranges for a role requires modeling the interaction of multiple factors: years of experience, skills, industry, location, company size, and seniority level. It is a regression problem with interesting feature engineering challenges.

What you will build: A salary prediction model trained from scratch using PyTorch that predicts expected compensation given a job title, years of experience, location, and top skills. Build a user-facing calculator interface.

Tools & Libraries: Python, PyTorch, Pandas, scikit-learn (for preprocessing), Matplotlib, Streamlit

Dataset: Glassdoor or Levels.fyi dataset (Kaggle): compensation data including base salary, stock, and bonus across companies, roles, and locations

How it works:

- Load and explore the salary dataset; analyze distributions by role, location, and experience level using box plots and violin plots

- Engineer features: encode categorical variables (job title, location, company) using target encoding fitted on the training split only (otherwise the encoding leaks test-set salaries), parse years of experience as numerical, one-hot encode skills

- Build and train a neural network with PyTorch: 3 hidden layers, ReLU activation, BatchNorm, and dropout regularization; minimize MSE loss

- Compare against a Gradient Boosting baseline (XGBoost); evaluate both models using RMSE and mean absolute percentage error (MAPE)

- Build a Streamlit salary calculator where users enter their profile and see a predicted salary range with a confidence interval
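A minimal sketch of smoothed target encoding in plain Python, fitted on training rows only so that validation and test salaries never influence the encoding. The smoothing strength m (the hypothetical `smoothing` parameter) pulls rare categories toward the global mean: enc(c) = (n_c · mean_c + m · global_mean) / (n_c + m). Recent scikit-learn releases also include a `TargetEncoder` with built-in cross-fitting.

```python
from collections import defaultdict

def fit_target_encoding(categories, targets, smoothing=10.0):
    """Smoothed mean target per category; call this on training rows only."""
    global_mean = sum(targets) / len(targets)
    sums, counts = defaultdict(float), defaultdict(int)
    for c, y in zip(categories, targets):
        sums[c] += y
        counts[c] += 1
    # sums[c] equals n_c * mean_c, so this is the smoothed mean from the formula above
    mapping = {c: (sums[c] + smoothing * global_mean) / (counts[c] + smoothing) for c in counts}
    return mapping, global_mean

def apply_target_encoding(categories, mapping, global_mean):
    """Unseen categories (e.g. a new city at inference time) fall back to the global mean."""
    return [mapping.get(c, global_mean) for c in categories]
```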

What you will learn:

- Feature engineering for tabular data with mixed categorical and numerical inputs

- Building neural networks in PyTorch from scratch rather than using high-level APIs

- Regression evaluation metrics and how to interpret MAPE in salary prediction context

- Model comparison methodology: when deep learning beats gradient boosting and when it does not

Why this gets you hired: HR analytics is a growing field and salary equity is a pressing corporate priority. This project shows both technical depth (PyTorch from scratch) and practical relevance. The calculator interface turns it into a product, not just a notebook, and products get you hired.

Project 24: Loan eligibility predictor

Automated loan eligibility assessment is one of the most impactful applications of machine learning in finance, potentially improving access to credit while reducing human bias in lending decisions.

Problem statement: Predicting whether a loan applicant should be approved involves assessing risk based on financial and demographic data, a classification problem with significant regulatory and ethical dimensions.

What you will build: A binary loan eligibility classifier that takes applicant information as input and predicts approval or rejection with a confidence score and a feature importance explanation showing which factors drove the decision.

Tools & Libraries: Python, scikit-learn (Logistic Regression, Random Forest, Gradient Boosting), SHAP (for explainability), Pandas, Streamlit

Dataset: Loan Prediction Dataset (Kaggle / Analytics Vidhya): 614 loan applications with features including income, credit history, loan amount, and property area

How it works:

- Load and explore the dataset; handle missing values with domain-appropriate strategies (median imputation for income, mode for categorical features)

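- Engineer features: a debt-to-income proxy (estimated monthly installment divided by household income, since the dataset records no existing debts), loan amount relative to applicant income, total household income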

- Train and compare Logistic Regression, Random Forest, and Gradient Boosting; tune hyperparameters using GridSearchCV

- Apply SHAP (SHapley Additive exPlanations) to explain individual predictions: show which features pushed the decision toward approval or rejection

- Build a Streamlit form where applicants enter their information and see a prediction with a SHAP waterfall chart explaining the result
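The engineered ratios from the feature step might be computed like this. The sketch assumes the Kaggle columns ApplicantIncome, CoapplicantIncome, LoanAmount, and Loan_Amount_Term have already been imputed, that incomes are monthly, and that LoanAmount is in thousands; all of these are assumptions to check against the dataset description. The installment proxy ignores interest, so treat it as a rough debt-burden signal rather than a true debt-to-income ratio.

```python
import numpy as np

def loan_features(applicant_income, coapplicant_income, loan_amount_k, term_months):
    """Engineered ratios; assumes monthly incomes and LoanAmount in thousands."""
    applicant_income = np.asarray(applicant_income, dtype=float)
    total_income = applicant_income + np.asarray(coapplicant_income, dtype=float)
    loan = np.asarray(loan_amount_k, dtype=float) * 1000.0
    term = np.asarray(term_months, dtype=float)
    installment = loan / term  # loan spread evenly over the term, interest ignored
    safe_income = np.where(total_income > 0, total_income, np.nan)  # avoid division by zero
    return {
        "total_income": total_income,
        "loan_to_income": loan / safe_income,
        "installment_to_income": installment / safe_income,
    }
```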

What you will learn:

- Feature engineering for financial risk assessment

- Comparing classical ML classifiers with rigorous cross-validation

- Model explainability using SHAP, critical for regulated industries like banking

- Ethical AI considerations: detecting and mitigating bias in lending models

Why this gets you hired: Lending decisions affect millions of lives. This project is relevant to fintech, banking, and insurance roles, and the SHAP explainability component is a major differentiator: it shows you understand that model interpretability matters in regulated industries, not just model accuracy.

Project 25: Car price prediction system

Used car pricing is a highly practical regression problem with a massive market. AI-based vehicle valuation tools are used by dealers, insurance companies, and consumers to determine fair market value.

Problem statement: Car prices depend on a large number of interacting factors: make, model, year, mileage, condition, fuel type, transmission, and regional demand. Modeling these interactions accurately requires thoughtful feature engineering.

What you will build: A car price prediction model built with PyTorch that takes vehicle specifications as input and outputs an estimated market price. Build a comparison tool where users can see how changing specs (e.g., adding 20,000 km) impacts the predicted value.

Tools & Libraries: Python, PyTorch, Pandas, NumPy, Matplotlib, scikit-learn (preprocessing), Streamlit

Dataset: Car Price Prediction Dataset (Kaggle): thousands of used car listings with price and vehicle specification features

How it works:

- Load and clean the dataset: handle outliers in price using IQR filtering, parse mileage from string format, handle missing values

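- Engineer features: vehicle age from year and current date, kilometers driven per year, and brand tier encoding based on average brand price (compute the brand averages on the training split only, and avoid features such as price per kilometer that are derived from the target itself)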

- Convert the DataFrame to PyTorch Tensors; build a neural network with 4 hidden layers and batch normalization

- Train with learning rate scheduling (ReduceLROnPlateau); track training and validation loss curves to diagnose overfitting

- Build a ‘what if’ Streamlit calculator where users specify a car and see the predicted price change as they modify individual features
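The cleaning and feature steps can be sketched with NumPy. The IQR filter keeps prices within Tukey’s fences (Q1 - 1.5 x IQR to Q3 + 1.5 x IQR). `parse_km` assumes a hypothetical mileage string format such as ‘45,000 km’, so adapt it to the actual column, and the km-per-year feature treats brand-new cars as one year old to avoid dividing by zero.

```python
import numpy as np

def iqr_mask(values, k=1.5):
    """True for rows inside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's fences)."""
    values = np.asarray(values, dtype=float)
    q1, q3 = np.percentile(values, [25, 75])
    iqr = q3 - q1
    return (values >= q1 - k * iqr) & (values <= q3 + k * iqr)

def parse_km(text):
    """'45,000 km' -> 45000.0 (hypothetical format); NaN if no digits are found."""
    digits = "".join(ch for ch in str(text) if ch.isdigit() or ch == ".")
    return float(digits) if digits else float("nan")

def vehicle_features(year, km, current_year):
    """Vehicle age in years and average kilometers driven per year."""
    age = current_year - np.asarray(year, dtype=float)
    km = np.asarray(km, dtype=float)
    km_per_year = km / np.maximum(age, 1.0)  # brand-new cars count as 1 year old
    return age, km_per_year
```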

What you will learn:

- Data cleaning and outlier handling for real-world pricing datasets

- PyTorch data pipeline: Dataset, DataLoader, and tensor operations

- Learning rate scheduling and regularization techniques for tabular deep learning

- Building interactive ‘what if’ prediction interfaces that demonstrate business value
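At its core, the ‘what if’ interface predicts twice, once with the original specs and once with a single feature changed, and reports the difference. A minimal sketch, assuming a fitted model that exposes a scikit-learn-style `predict(DataFrame)` method (a PyTorch network would need a small wrapper that scales the inputs and converts them to tensors); `what_if` is a hypothetical helper name:

```python
import pandas as pd


def what_if(model, base: dict, feature: str, new_value) -> float:
    """Change in predicted price when one input feature is set to new_value.

    `model` is assumed to be any fitted regressor with predict(DataFrame).
    """
    before = pd.DataFrame([base])   # one-row frame with the original specs
    after = before.copy()
    after[feature] = new_value      # modify exactly one feature
    return float(model.predict(after)[0] - model.predict(before)[0])
```

In the Streamlit app, each slider change would call this helper and display the returned delta next to the base prediction.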

Why this gets you hired: Automotive pricing is a concrete, relatable domain that any interviewer understands instantly. The PyTorch-from-scratch implementation shows technical depth, and the interactive what-if feature turns this from an academic exercise into a product demo.

Project 26: Earthquake prediction model

Earthquake prediction remains one of science’s great unsolved challenges. Building a machine learning model on seismic data does not solve the problem, but it is a powerful exercise in time series analysis, feature engineering, and working with geospatial data.

Problem statement: Historical seismic data contains patterns of magnitude, depth, frequency, and location that can inform probabilistic predictions of future seismic activity. While accurate individual earthquake prediction is not yet possible, forecasting the probability of significant events in a region over a time window has research value.

What you will build: A probabilistic earthquake occurrence model trained on USGS seismic data that forecasts the likelihood of a magnitude 5.0+ earthquake in a given region over the next 30 days. Visualize historical earthquake distribution on an interactive world map.

Tools & Libraries: Python, ANN (PyTorch or TensorFlow), Pandas, NumPy, Matplotlib, Folium (for geospatial visualization), Streamlit

Dataset: USGS Earthquake Catalog (available via the USGS API) or the SOCR Earthquake Dataset, with historical earthquake records including magnitude, depth, latitude, longitude, and timestamp

How it works:

- Download historical seismic data; explore distribution of magnitudes, depths, and geographic clustering

- Engineer temporal and spatial features: earthquake frequency in the last 7/30/90 days per region, average magnitude trend, depth distribution

- Build an Artificial Neural Network (ANN) with 3 hidden layers to predict the probability of a significant event in the next 30 days for a grid of geographic regions

- Evaluate with probabilistic metrics: the Brier score (a proper scoring rule for probability forecasts) and reliability diagrams that check calibration

- Deploy an interactive Folium map showing historical earthquakes and model predictions per region overlaid as a heat map
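The grid-based feature engineering above can be sketched in pandas. This is a minimal sketch under stated assumptions: the column names `time`, `latitude`, `longitude`, and `mag` (similar to the USGS CSV export), the 5-degree cell size, and the function name `grid_features` are all hypothetical choices. Features use only events strictly before the reference time, and the label covers the following 30 days, so no future information leaks into the inputs. Cells with no events in the past 90 days are simply omitted here; a full model would reindex over every grid cell.

```python
import numpy as np
import pandas as pd


def grid_features(events: pd.DataFrame, ref_time: pd.Timestamp,
                  cell_deg: float = 5.0) -> pd.DataFrame:
    """Per-cell event counts before ref_time plus an M5.0+ next-30-day label."""
    ev = events.copy()
    # Assign each event to a lat/lon grid cell of size cell_deg x cell_deg
    ev["cell_lat"] = np.floor(ev["latitude"] / cell_deg).astype(int)
    ev["cell_lon"] = np.floor(ev["longitude"] / cell_deg).astype(int)

    past = ev[ev["time"] < ref_time]
    counts = {}
    for days in (7, 30, 90):
        window = past[past["time"] >= ref_time - pd.Timedelta(days=days)]
        counts[f"count_{days}d"] = window.groupby(["cell_lat", "cell_lon"]).size()
    feats = pd.DataFrame(counts).fillna(0)  # cell absent from a window -> 0 events

    # Label: did any M5.0+ event occur in the cell during [ref_time, ref_time + 30d)?
    future = ev[(ev["time"] >= ref_time) &
                (ev["time"] < ref_time + pd.Timedelta(days=30))]
    big = future[future["mag"] >= 5.0].groupby(["cell_lat", "cell_lon"]).size()
    feats["label_m5_next30d"] = (big.reindex(feats.index, fill_value=0) > 0).astype(int)
    return feats
```

Sliding `ref_time` forward month by month yields a training table; keep the later reference times for validation so the split respects time order.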

What you will learn:

- Geospatial feature engineering: creating grid-based spatial aggregations

- Probabilistic prediction and calibration evaluation using Brier scores

- Working with time series data that has irregular temporal spacing

- Geospatial visualization with Folium and interactive map deployment
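The Brier score is the mean squared difference between each predicted probability and the 0/1 outcome, so lower is better. The sketch below computes it in NumPy along with the per-bin table behind a reliability diagram; the function names and the 10 equal-width bins are illustrative choices. scikit-learn also provides `sklearn.metrics.brier_score_loss` and `sklearn.calibration.calibration_curve` for the same purpose.

```python
import numpy as np


def brier_score(y_true, p_pred) -> float:
    """Mean of (predicted probability - observed outcome)^2."""
    y = np.asarray(y_true, dtype=float)
    p = np.asarray(p_pred, dtype=float)
    return float(np.mean((p - y) ** 2))


def reliability_table(y_true, p_pred, n_bins: int = 10):
    """(mean predicted prob, observed frequency, count) for each non-empty bin."""
    y = np.asarray(y_true, dtype=float)
    p = np.asarray(p_pred, dtype=float)
    # Equal-width bins on [0, 1]; p == 1.0 is placed in the last bin
    bins = np.minimum((p * n_bins).astype(int), n_bins - 1)
    rows = []
    for b in range(n_bins):
        mask = bins == b
        if mask.any():
            rows.append((float(p[mask].mean()), float(y[mask].mean()), int(mask.sum())))
    return rows
```

A well-calibrated model produces rows where the mean predicted probability and the observed frequency are close; plotting one against the other gives the reliability diagram.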

Why this gets you hired: This project demonstrates that you can work with real scientific data, engineer domain-specific features, and build probabilistic models, skills valued in research, climate tech, and public safety AI roles. The geospatial visualization makes the demo genuinely impressive.

Project 27: Wine quality predictor

Quality prediction from physicochemical measurements is a standard regression problem with direct industry applications in food science, agriculture, and quality control. Wine provides a well-understood, clean dataset that allows deep exploration of the modeling workflow.

Problem statement: Wine quality is assessed by expert tasters on a scale of 0-10, but that process is expensive and subjective. A model that predicts quality from measurable chemical properties (pH, acidity, alcohol content, sulphates) can automate quality screening.

What you will build: A wine quality predictor that takes 11 physicochemical measurements as input and predicts a quality score. Frame it as both a regression problem (predict exact score) and a classification problem (low, medium, high quality). Compare the approaches.

Tools & Libraries: Python, scikit-learn (Random Forest, SVM), XGBoost, Pandas, Seaborn, Matplotlib, Streamlit

Dataset: UCI Wine Quality Dataset, with 6,497 red and white wine samples, 11 physicochemical features, and expert quality ratings from 3 to 9

How it works:

- Load and explore both red and white wine datasets; compare feature distributions between them using pair plots and correlation heatmaps

- Identify the most predictive features for quality using a correlation matrix and feature importance from a preliminary Random Forest

- Frame as a classification problem: bin quality scores into low (3-5), medium (6-7), and high (8-9) categories so the rare extreme scores are pooled into larger classes (the high class stays small, so imbalance is reduced rather than eliminated)

- Train Random Forest, SVM, and XGBoost classifiers; tune with RandomizedSearchCV; compare performance using stratified 10-fold cross-validation

- Build a Streamlit quality analyzer where users slide input feature values and see real-time quality prediction changes
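The binning and tuning steps above can be sketched with scikit-learn, shown here for the Random Forest only (SVM and XGBoost follow the same pattern). This is a minimal sketch: the search space, `class_weight="balanced"`, macro-F1 scoring, and the helper names `bin_quality` and `tune_random_forest` are assumptions, not settings prescribed by the project.

```python
import pandas as pd
from scipy.stats import randint
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV, StratifiedKFold


def bin_quality(scores: pd.Series) -> pd.Series:
    """Map quality scores to low (3-5), medium (6-7), high (8-9)."""
    # Right-closed bins: (2, 5], (5, 7], (7, 9]
    return pd.cut(scores, bins=[2, 5, 7, 9], labels=["low", "medium", "high"])


def tune_random_forest(X, y, n_iter: int = 10, seed: int = 0) -> RandomizedSearchCV:
    """Randomized hyperparameter search scored with stratified 10-fold CV."""
    cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)
    search = RandomizedSearchCV(
        RandomForestClassifier(class_weight="balanced", random_state=seed),
        param_distributions={
            "n_estimators": randint(100, 300),   # sampled uniformly from [100, 300)
            "max_depth": [None, 8, 16],
            "min_samples_leaf": randint(1, 5),
        },
        n_iter=n_iter,
        scoring="f1_macro",   # treats the small "high" class as equally important
        cv=cv,
        random_state=seed,
    )
    return search.fit(X, y)
```

RandomizedSearchCV samples a fixed number of configurations, which keeps the cost of 10-fold CV manageable; GridSearchCV becomes practical only when the grid is small.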

What you will learn:

- Exploratory data analysis with correlation analysis and feature importance

- The choice between regression and classification framing for ordinal targets

- Hyperparameter tuning with RandomizedSearchCV vs GridSearchCV: when each is appropriate

- Interactive slider-based prediction interfaces that make model behavior intuitive

Why this gets you hired: Wine quality prediction is a classic portfolio project, but executing it well with dual problem framing, proper cross-validation, and an interactive interface makes it far more impressive than the usual notebook submission. It shows methodological thinking, not just code execution.

Project 28: IPL cricket score predictor

Sports analytics is a growing AI application domain where predictive models inform team strategy, commentary, and betting markets. IPL score prediction is a time series problem with domain-specific feature engineering challenges.

Problem statement: Predicting the final score of an IPL cricket match at any point in the game requires modeling complex dynamics: current run rate, wickets remaining, pitch conditions, batting lineup, and historical team performance.

What you will build: A live score prediction model that takes the current game state (overs bowled, runs scored, wickets fallen, venue, teams, batting players) and predicts the likely final score with a confidence range.

Tools & Libraries: Python, PyTorch or TensorFlow (for the LSTM), XGBoost/LightGBM (gradient boosting), Pandas, NumPy, Streamlit

Dataset: Kaggle IPL Dataset, with ball-by-ball and match-level data for IPL seasons from 2008 onwards (season coverage varies by dataset version)

How it works:

- Load ball-by-ball data; engineer features at each over: current run rate, projected run rate, wickets in hand, boundary percentage, team average chase score at this venue

- Create target labels: the final total of each innings

- Train two models: an LSTM on the sequential over-by-over features, and an XGBoost regressor on aggregated features; compare RMSE

- Evaluate separately on overs 1-6 (powerplay), 7-15 (middle overs), and 16-20 (death overs) to understand where the model performs best

- Build a live game simulator in Streamlit: users input over-by-over updates and watch the score prediction update in real time
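The over-level features, targets, and phase labels described above can be sketched in pandas. This minimal sketch covers only run rate and wickets in hand; the column names `match_id`, `inning`, `over` (0-based), `runs` (total runs off the ball, extras included), and `is_wicket` are hypothetical, since column names differ between versions of the Kaggle IPL data.

```python
import pandas as pd


def over_level_features(balls: pd.DataFrame) -> pd.DataFrame:
    """One row per completed over with cumulative state and the innings total."""
    per_over = (
        balls.groupby(["match_id", "inning", "over"], as_index=False)
        .agg(runs=("runs", "sum"), wickets=("is_wicket", "sum"))
        .sort_values(["match_id", "inning", "over"])
        .reset_index(drop=True)
    )
    per_over["overs_done"] = per_over["over"] + 1
    g = per_over.groupby(["match_id", "inning"])
    per_over["cum_runs"] = g["runs"].cumsum()
    per_over["wickets_in_hand"] = 10 - g["wickets"].cumsum()
    per_over["run_rate"] = per_over["cum_runs"] / per_over["overs_done"]
    # Target: final innings total, repeated on every over of that innings
    per_over["final_total"] = g["runs"].transform("sum")
    # Phase label for stage-wise evaluation: 1-6, 7-15, 16-20
    per_over["phase"] = pd.cut(per_over["overs_done"], bins=[0, 6, 15, 20],
                               labels=["powerplay", "middle", "death"])
    return per_over
```

Split train and test by match (or by season) rather than by row, so overs from the same innings never appear on both sides; grouping test errors by `phase` then gives the early-versus-late accuracy comparison.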

What you will learn:

- Domain-specific feature engineering for sports time series

- Comparing sequential models (LSTM) vs tree-based models (XGBoost) on the same time series task

- Evaluating regression models at different stages of a time series (early vs late prediction accuracy)

- Building a live simulation interface that communicates model uncertainty

Why this gets you hired: Sports analytics is a growing AI specialization, and IPL is one of the most data-rich sports ecosystems in India. This project is immediately engaging for any Indian tech audience and demonstrates time series modeling, feature engineering, and interactive deployment in one package.

Project 29: Spam email classifier

Email spam filtering was one of the earliest practical machine learning systems deployed at scale. Building one from scratch teaches fundamental NLP classification skills that apply across many text-based AI problems.

Problem statement: Spam emails range from obvious to sophisticated. A model must learn to distinguish spam from legitimate email without generating false positives that filter out important messages: a classic precision-recall trade-off.

What you will build: A spam classifier trained on labeled email data that achieves high precision (few legitimate emails flagged as spam) while maintaining strong recall. Compare a TF-IDF baseline against a transformer model to understand the improvement.

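Tools & Libraries: Python, TensorFlow, Hugging Face Transformers (DistilBERT), scikit-learn (Naive Bayes, TF-IDF, Logistic Regression), NLTK, Pandas, Streamlit

Dataset: Enron-Spam Dataset (spam/ham-labeled emails built from the Enron corpus; the raw Enron Email Dataset has no spam labels) or the Kaggle SMS Spam Collection Dataset, both well-curated and labeled with spam and ham categories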

How it works:

- Load and preprocess the dataset: strip HTML tags, remove stopwords, stem or lemmatize words using NLTK

- Implement a TF-IDF + Multinomial Naive Bayes baseline, a classic and highly effective spam filter

- Fine-tune a DistilBERT model for binary classification; compare against the Naive Bayes baseline on precision-recall metrics

- Analyze false positives carefully: which legitimate emails get misclassified as spam, and why?

- Build a Gmail-style inbox simulation in Streamlit where email text can be submitted and classified with an explanation of suspicious features
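The TF-IDF + Naive Bayes baseline and the precision-first threshold tuning can be sketched with scikit-learn. This is a minimal sketch: the vectorizer settings, `alpha=0.1`, the 0.98 precision target, and the helper names `train_baseline` and `threshold_for_precision` are assumptions. The threshold should be chosen on a held-out validation set, not on the training data.

```python
import numpy as np
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.metrics import precision_recall_curve
from sklearn.naive_bayes import MultinomialNB
from sklearn.pipeline import make_pipeline


def train_baseline(texts, labels):
    """TF-IDF features + Multinomial Naive Bayes; labels: 1 = spam, 0 = ham."""
    model = make_pipeline(
        TfidfVectorizer(lowercase=True, stop_words="english", ngram_range=(1, 2)),
        MultinomialNB(alpha=0.1),
    )
    return model.fit(texts, labels)


def threshold_for_precision(model, texts, labels, min_precision=0.98) -> float:
    """Lowest spam-probability threshold whose precision reaches min_precision.

    Among thresholds meeting the precision target, the lowest one keeps the
    most recall. Returns 1.0 (flag almost nothing) if none qualifies.
    """
    scores = model.predict_proba(texts)[:, 1]
    precision, recall, thresholds = precision_recall_curve(labels, scores)
    # precision has one more entry than thresholds; drop the final point
    ok = np.where(precision[:-1] >= min_precision)[0]
    return float(thresholds[ok[0]]) if len(ok) else 1.0
```

At inference time, a message is flagged as spam when `model.predict_proba([text])[0, 1] >= threshold`; raising `min_precision` trades recall for fewer legitimate emails in the spam folder.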

What you will learn:

- TF-IDF as a feature representation method and how it captures term importance

- Naive Bayes text classification: why it remains competitive despite its simplicity

- The precision-recall trade-off in binary classification and how to tune the threshold

- Comparing classical NLP baselines against transformer models: when the complexity is justified

Why this gets you hired: Email spam detection demonstrates breadth: you cover classical ML, NLP preprocessing, and modern transformers in one project. The comparison between approaches shows mature engineering judgment. You do not just reach for the most complex tool; you evaluate trade-offs. That thinking is what companies want.

Project 30: Customer churn prediction

Retaining existing customers is significantly cheaper than acquiring new ones. Customer churn prediction models are among the most universally deployed ML systems across telecommunications, SaaS, banking, and subscription businesses.

Problem statement: Identifying which customers are likely to cancel their subscription or switch providers before they actually leave allows companies to intervene with retention offers. The challenge is identifying subtle behavioral signals that predict departure.

What you will build: A churn prediction model that takes customer usage data and account information as input and outputs a churn probability score with key risk factors highlighted for each customer. Build a retention campaign prioritization interface.

Tools & Libraries: Python, scikit-learn (Logistic Regression, Random Forest), XGBoost, SHAP, Pandas, Streamlit

Dataset: Telco Customer Churn Dataset (Kaggle / IBM Sample Data), with 7,000+ telecom customers and their usage features, contract details, and churn labels

How it works:

- Load and explore the dataset; analyze churn rates by contract type, tenure, and payment method using grouped visualizations

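- Engineer behavioral features: change in monthly spend (current MonthlyCharges versus the historical average, TotalCharges / tenure), number of subscribed services, and service utilization ratio; if your data also includes support-call logs, add the number of support calls in the last 3 months

- Train XGBoost with class weighting (its scale_pos_weight parameter) to offset the minority churn class; tune using cross-validated grid search; evaluate with precision-recall AUC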

- Apply SHAP to generate per-customer explanations: for each at-risk customer, show the top 3 factors pushing toward churn

- Build a Streamlit dashboard showing the top 100 at-risk customers ranked by churn probability with their individual SHAP explanations
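The feature-engineering and class-weighting steps can be sketched in pandas before the data goes to XGBoost. This minimal sketch assumes the column names of the IBM Telco sample data (`tenure`, `MonthlyCharges`, `TotalCharges`, the Yes/No service columns, `Churn`); verify them against your copy. The helper name `churn_features` and the feature names are hypothetical.

```python
import pandas as pd

# Yes/No service columns as named in the IBM Telco sample data (verify locally)
SERVICE_COLS = ["PhoneService", "MultipleLines", "OnlineSecurity", "OnlineBackup",
                "DeviceProtection", "TechSupport", "StreamingTV", "StreamingMovies"]


def churn_features(df: pd.DataFrame):
    """Return (features, binary target, suggested scale_pos_weight)."""
    out = pd.DataFrame(index=df.index)
    # TotalCharges is stored as text, with blanks for brand-new customers
    total = pd.to_numeric(df["TotalCharges"], errors="coerce").fillna(0.0)
    out["tenure"] = df["tenure"]
    out["avg_monthly_spend"] = total / df["tenure"].clip(lower=1)
    # Positive: paying more now than the historical average (e.g. a recent upgrade)
    out["spend_change"] = df["MonthlyCharges"] - out["avg_monthly_spend"]
    # Count of services answered "Yes" ("No internet service" counts as not subscribed)
    out["n_services"] = (df[SERVICE_COLS] == "Yes").sum(axis=1)
    y = (df["Churn"] == "Yes").astype(int)
    # Negatives / positives: a common starting value for XGBoost's scale_pos_weight
    scale_pos_weight = (y == 0).sum() / max(int((y == 1).sum()), 1)
    return out, y, float(scale_pos_weight)
```

Categorical columns such as Contract and PaymentMethod would be one-hot encoded alongside these features before training.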

What you will learn:

- Behavioral feature engineering from customer event data

- Business framing of ML problems: connecting model output to actionable retention strategy

- SHAP explanations for customer-level model interpretability

- Dashboard design for business analytics: how to make ML outputs actionable for non-technical stakeholders

Why this gets you hired: Churn prediction is deployed at virtually every subscription business in the world. This project speaks to SaaS, fintech, telecom, and e-commerce roles. The SHAP-powered per-customer explanation dashboard is the kind of thinking that product and business teams value: it turns a model into a decision support tool.

Category 04 Recommendation systems

Recommendation systems are the invisible engine behind the most engaging consumer products in the world. Netflix, Spotify, YouTube, Amazon, TikTok: their core product experience is a recommendation algorithm. Understanding how to build them is foundational for any AI role at a consumer-facing company.

Project 31: Movie recommendation engine